The Fiber Optic Update

Every

year is another busy year for fiber optics: new technology,

components, applications and usually a few surprises.

On this page we've gathered some of the more important

topics, covering new technology and applications that FOA believes every tech needs to

know. Many of these articles are from the FOA

monthly newsletter, which you can subscribe

to here.

We also recommend the FOA "Fiber

FAQs" page with tech questions from customers originally

printed in the FOA Newsletter. We get lots of interesting

questions at FOA.

This page is part of a Fiber

U Tech Update Course.

Sections: Technology Components Installation Testing Safety

Got

questions? Try the FOA

Guide and use the site search.

Technology

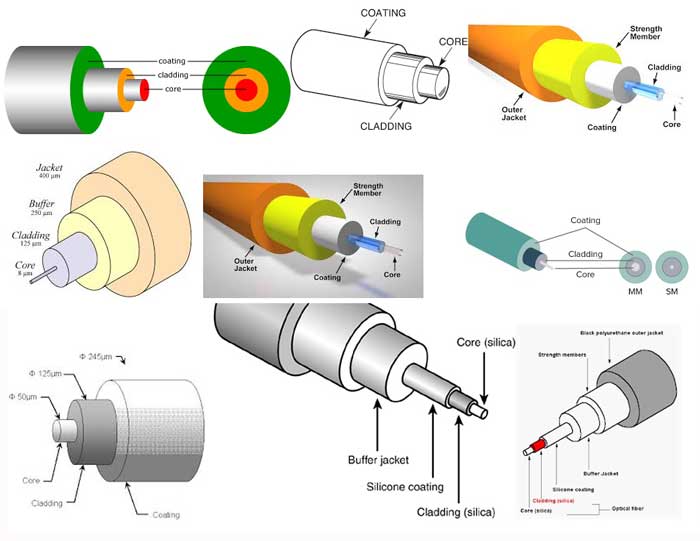

They've ALL Got It All Wrong - And They Confuse A Lot Of People - YOU CANNOT STRIP THE CLADDING OFF GLASS FIBER!!!

We recently got this email from a student with field experience

taking a fiber optic class: "The instructors are telling us

that we are stripping the cladding from the core when prepping

to cleave MM and SM fiber. I learned from Lenny

Lightwave years ago, this is not correct. I do not want

to embarrass them, but I don't want my fellow techs to look

foolish when we graduate from this course."

I'll

share with you our answer to this student in a moment, but

first it seems important to understand where this

misinformation comes from. We did an image search on the

Internet for drawings of optical fiber. Here is what we found:

EVERY fiber drawing we found in the Internet search, with one exception (which we will show in a second), showed the same thing: the core of the fiber sticking out of the cladding as a separate element, and the cladding sticking out of the primary buffer coating. Not all of those drawings come from websites where you might expect some technical inaccuracies; several were from fiber or other fiber optic component manufacturers and one was from a company specializing in highly technical fiber research equipment.

The only drawing we found that does not show the core separate from the cladding was (really!) on the FOA Guide page on optical fiber.

No wonder everyone is confused. Practically every drawing shows

the core and cladding being separate elements in an optical

fiber.

So how did FOA help this student explain the facts to his instructors? We thought about explaining how fiber is manufactured by drawing it from a solid glass preform with the same index profile as the final fiber. But we figured a simpler way to show that the fiber core and cladding are one solid piece of glass was to look at a completed connector or a fusion splice.

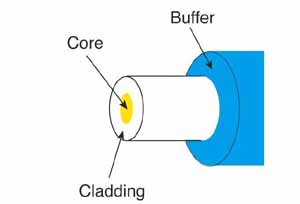

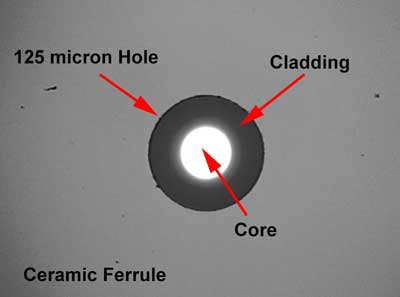

We started with a video microscope view of the end of a

connector being inspected for cleaning.

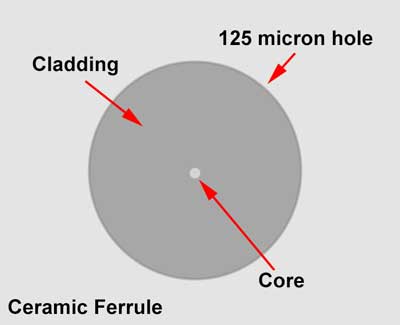

Here you can see the fiber in the ceramic ferrule. The hole in the

ferrule is ~125 microns in diameter (usually a micron or two

bigger so the fiber fits easily in the ferrule with some

adhesive). The illuminated core shows how the cladding

traps light in the core but carries little or no light itself.

This does not look like the cladding was stripped, does it?

Here is the same view with a singlemode fiber at higher

magnification.

And no connector ferrules have 50, 62.5 or 9 micron holes so

that just the core would fit in the ferrule, do they?

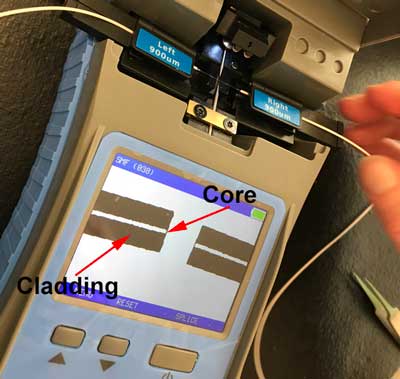

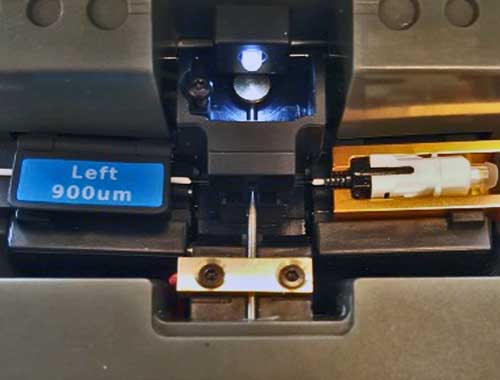

What about stripping fiber for fusion splicing? Here is the view

of fiber in an EasySplicer ready to splice.

What do you see in the EasySplicer screen? Isn't that the core

in the middle and the cladding around it? In fact, isn't this a

"cladding alignment" splicer?

We rest our case. If that's not sufficient to convince everyone

that you do not strip the cladding when preparing fiber for

termination or splicing, we're not sure what is.

Special Request: To everyone in the fiber optic industry

who has a website with a drawing on it that shows the core

of optical fiber separate from the cladding, can you please

change the drawing or at the very least add a few words to

tell readers that in glass optical fiber the core and

cladding are all part of one strand of glass and when you

strip fiber, you strip the primary buffer coating down to

the 125 micron OD of the cladding?

Bottom Line:

- Most diagrams of fiber construction are wrong - showing core and cladding as separate - but they are one solid piece of glass.

- You cannot strip the cladding from glass fibers.

Connector Loss For Splice-On Connectors

FOA

received a call from a contractor working on a network. His

subcontractor doing termination presented data on terminations

using mechanical splice-on connectors where he claimed the TIA

standard for these connectors was 0.75dB for the connector

PLUS 0.3dB for the splice, for a total of 1.05dB. He wanted to

know if this were true.

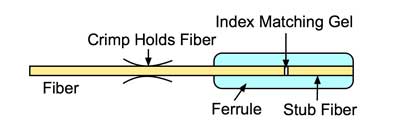

No, it

is not true. These connectors have an internal splice to a

stub fiber already glued in the ferrule and factory polished.

The loss of the connector used to terminate a fiber must

include the splice since it is the termination method and

there is no way to test it separately from the connector

itself.

Typical

mechanical splice-on connector, also called a prepolished/splice

connector.

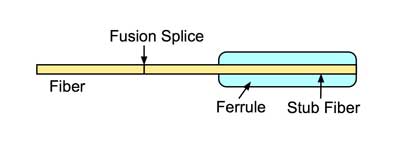

A fusion splice-on connector has a stub fiber that's fusion spliced to the fiber being terminated.

We noted that the TIA loss value of 0.75dB is very high compared to adhesive/polish connectors, which average around 0.3dB loss when tested against a reference connector. In the standards it has remained at 0.75dB to cover this type of connector and array connectors like the MPO.

Another question often asked about these types of connectors, as well as spliced-on pigtails for termination, is whether you can differentiate the loss of the connector from the splice loss with an OTDR. The answer is generally no: the resolution of the OTDR is not adequate to distinguish the two losses unless the pigtail is very long. The splice in a fusion or mechanical splice-on connector is much too close to the connector for an OTDR to see both events.

Cable

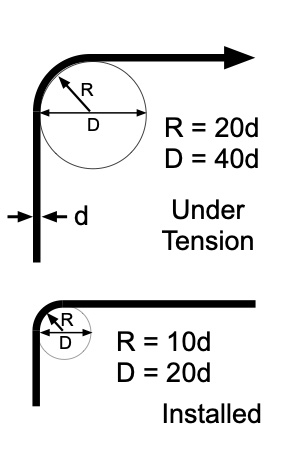

Bend Diameter (Radius)

All

fiber optic cables have specifications that must not be

exceeded during installation to prevent irreparable damage

to the cable. This includes pulling tension, minimum bend

diameter or radius and crush loads. Installers must understand these

specifications and know how to pull cables without damaging

them.

The normal recommendation for fiber optic cable is a minimum bend radius of 20 times the diameter of the cable while under tension during pulling. When not under tension, e.g. cable stored in service loops, the minimum recommended long-term bend radius is 10 times the cable diameter.

Note: Always check the cable specifications for cables you

are installing as some cables such as the high fiber count

cables have different bend radius specifications from

regular cables!

And also note that some manufacturers are now quoting "bend

diameter" instead of or in addition to bend radius. Bend

diameter is more relevant when dealing with service loops or

storage loops, while bend radius is more aimed at bending

cable around corners. Remember the diameter is twice the

radius of a circle, so the minimum bend diameter of a

cable under pulling tension, e.g. the diameter of a

capstan used in pulling cables, would be 40 times the

diameter of the cable and the storage loop minimum diameter

would be 20 times the diameter of the cable.

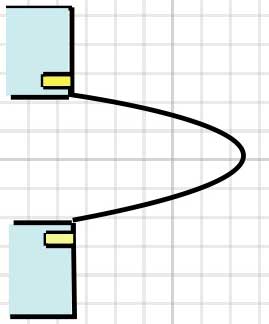

Under

tension (top) and after pulling (bottom)

Bend radius example: A cable 13mm (0.5") diameter would have a

minimum bend radius under tension of 20 X 13mm = 260mm (20 x

0.5" = 10") That means if you are pulling this cable over a

pulley, that pulley should have a minimum radius of 260mm/10" or

a diameter of 520mm/20" - don't get radius and diameter mixed

up!
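The rule of thumb above is easy to put into a few lines of Python. This is only a sketch of the generic 20X/10X guideline; the function name and dictionary keys are our own illustration, and the cable manufacturer's datasheet always overrides these multipliers.

```python
def min_bend(cable_diameter_mm, under_tension):
    """Minimum bend radius and diameter (mm) from the 20X / 10X rule of thumb."""
    # 20X cable diameter while pulling, 10X once installed (assumed generic rule).
    multiplier = 20 if under_tension else 10
    radius_mm = multiplier * cable_diameter_mm
    # A pulley or storage loop is specified by diameter, which is twice the radius.
    return {"radius_mm": radius_mm, "diameter_mm": 2 * radius_mm}


pulling = min_bend(13, under_tension=True)
stored = min_bend(13, under_tension=False)
print(pulling)  # {'radius_mm': 260, 'diameter_mm': 520}
print(stored)   # {'radius_mm': 130, 'diameter_mm': 260}
```

For the 13mm cable in the example, the pulley needs a 260mm radius (520mm diameter) during the pull, and a service loop needs at least a 130mm radius (260mm diameter).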

Why

is it important? Not following bend radius guidelines can lead

to cable damage. If the cable is damaged in installation, the

manufacturer's warranty is voided. Here is what one

manufacturer's warranty says: "This

warranty does not apply to normal wear and tear or damage

caused by negligence, lack

of maintenance, accident, abnormal operation, improper

installation or service, unauthorized repair, fire,

floods, and acts of God." And their specifications call

out the minimum bend radius as "20 X OD-Installation, 10 X

OD-In-Service."

And When An Installer Gets it Wrong

There are two problems here, one visible and one hidden.

The visible one is the pulley mounted on the side of the

truck used to change the direction of the cable to allow

using the capstan mounted on the rear of the truck. The

cable is being bent about 120 degrees over a pulley that

appears to be about 120mm (5 inches) diameter. That's a

radius of 60mm or 2.5 inches. That pulley looks like a

stringing block used for stringing ropes when pulling in

power lines.

We believe the cable was an 864 fiber ribbon cable with a

diameter of 24mm (0.92") with a minimum bend radius of

360mm or 14". That means the pulley the cable is

being pulled over is ~1/6th the size it should be - shown

by the dotted red circle above.
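To put numbers on that mismatch, here is a rough Python sketch that compares a pulley to a cable's minimum bend radius. The function name and keys are our own illustration, and the 360mm figure is simply the spec quoted above for this cable; for a real pull, take the value from the manufacturer's datasheet.

```python
def pulley_check(pulley_diameter_mm, min_bend_radius_mm):
    """Compare a pulley's radius to the cable's minimum bend radius."""
    pulley_radius_mm = pulley_diameter_mm / 2
    return {
        "pulley_radius_mm": pulley_radius_mm,
        "fraction_of_required": pulley_radius_mm / min_bend_radius_mm,
        "required_diameter_mm": 2 * min_bend_radius_mm,
        "ok": pulley_radius_mm >= min_bend_radius_mm,
    }


result = pulley_check(pulley_diameter_mm=120, min_bend_radius_mm=360)
print(result["pulley_radius_mm"])                # 60.0
print(round(result["fraction_of_required"], 3))  # 0.167, about 1/6
print(result["required_diameter_mm"])            # 720
print(result["ok"])                              # False
```

A pulley that respects the quoted 360mm minimum bend radius would be 720mm in diameter, six times the size of the one in the photo.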

The second problem is the angle of the cable coming out of

the manhole. It is exiting a conduit and being pulled

almost straight up out of the manhole. If there is no

hardware in the manhole, the cable is being pulled over an

edge exiting the conduit or the manhole, bending with a

very, very small radius.

One

can only speculate about the possible damage to a

cable when treated like this. What comes to mind

first is broken fibers, and that is a possibility.

But bending this tightly can also damage the cable

structure, including the fiberglass stiffeners,

strength members and jacket. Compromising the

integrity of the cable reduces its protection for

the fibers. Even the fiber ribbons can be

delaminated and fibers put under stress. A cable

pulled under these circumstances can have damage

along the entire length, not just a point where it

was kinked.

What should have been done on this pull? The

120mm/5" pulley should have been replaced with one

at least 6 times larger. The truck could have been

further from the manhole (and maybe turned to be

inline with the pull) so the angle of the cable

exiting the conduit was less. Hardware should have been

attached to the conduit to provide a proper bend

radius for the cable as it exited the conduit, and

the cable should have been protected wherever it contacted

the edges of the manhole.

Bottom Line:

- All cables have specifications for minimum bend radius

- Violating this spec may permanently damage the cable

- Bend radius is generally 20X cable diameter under tension - 10X after installation

Read

more about bend radius.

Optical Loss: Are You Positive It’s Positive?

Update

7/2020: Mystery solved! Investigations into ISO standards

showed the international standards committees changed the

definition of loss in a way that changes the sign for loss

but makes it violate all scientific convention on the use of

dB. This is documented below.

A recent post on a company’s blog and an article on the CI&M website discussed the polarity (meaning “+” or “-” numbers) of measurements of optical loss, claiming that insertion loss is always a positive number. The implication was that some people failed fourth grade math and did not understand positive and negative numbers.

Is that right?

The answer is no. Loss is a negative number, but instruments

that only measure loss (OLTS and OTDRs) display loss as a

positive number.

Suppose we set up a test. Let's measure power out of a cable

with a power meter and then attenuate the power by stressing the

cable. What happens?

FOA

created this short movie on the FOA

Guide page explaining dB showing how a power meter shows

loss when a cable is stressed to induce loss:

As the fiber is stressed, inducing loss, the power level goes from -20.0 dBm to -22.3 dBm. That's a more negative number.

(-22.3 dBm) - (-20.0 dBm) = -2.3 dB. That's basically 4th grade math.

No question – loss means a more negative power reading in dB

and a negative number in dB indicates loss.
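The subtraction above can be written as a tiny Python sketch. The function name is ours, not from any instrument or standard; the two readings are the ones from the movie.

```python
def change_in_db(before_dbm, after_dbm):
    """Change in power, in dB, between two power meter readings in dBm."""
    # Subtracting two absolute readings (dBm) gives a relative value (dB).
    return after_dbm - before_dbm


delta_db = change_in_db(before_dbm=-20.0, after_dbm=-22.3)
print(round(delta_db, 1))   # -2.3  (negative: power was lost)

# A loss-only instrument such as an OLTS drops the sign and shows the magnitude.
displayed_loss_db = abs(delta_db)
print(round(displayed_loss_db, 1))  # 2.3
```

The same function returns a positive number when the second reading is higher, which is a gain.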

If you want to calculate this yourself, FOA

has a XLS spreadsheet you can download that will

calculate the equations for optical power for you.

But if you are a manufacturer of fiber optic test instruments

that offers optical power meters and sources to test loss, why

would this confuse you? Well, it seems they think that when

we talk about loss, we do not give it a "+" or "-" sign, we just

say loss, so they just display it as a number without a sign.

Note: In IEC documents (and TIA documents adopted from IEC documents), the definition of attenuation in Sec. 3.1 is written to have attenuation calculated based on Power(reference)/Power(after attenuation). This definition leads to attenuation being a positive number, as it is normally displayed by an OLTS or OTDR. However, if one uses a fiber optic power meter calibrated in dBm, the result will be a negative number, since dBm is defined as Power(measured)/Power(1mW) (see FOTP-95, Sec. 6.2). If dBm were defined in the same manner as the IEC attenuation, with the reference power in the numerator, power levels below 1mW would be positive numbers, not negative as they are now, and power levels above 1mW would be negative!
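Written out, the two conventions in the note look like this. We assume the usual 10 log10 form of the decibel here; the symbols are our own shorthand, not notation taken from the IEC or TIA documents.

```latex
\begin{aligned}
A &= 10\log_{10}\!\left(\frac{P_{\mathrm{ref}}}{P_{\mathrm{out}}}\right)\ \mathrm{dB}
  && \text{(attenuation: reference power in the numerator)}\\[4pt]
P_{\mathrm{dBm}} &= 10\log_{10}\!\left(\frac{P_{\mathrm{meas}}}{1\ \mathrm{mW}}\right)
  && \text{(dBm: reference power in the denominator)}\\[4pt]
P_{\mathrm{dBm,out}} - P_{\mathrm{dBm,ref}}
  &= 10\log_{10}\!\left(\frac{P_{\mathrm{out}}}{P_{\mathrm{ref}}}\right) = -A
\end{aligned}
```

With the readings used earlier, -22.3 dBm minus -20.0 dBm is -2.3 dB on the power meter, while the attenuation A computed from the same two powers is +2.3 dB. The numbers agree; only the sign convention differs.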

Bottom Line: Confusion

- Loss in dB is a negative number

- Instruments that measure loss do not display negative signs with loss

- Gains are displayed with a negative sign

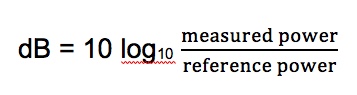

dB or dBm - Still Confusing (4/2020)

The

second most missed question on FOA/Fiber U online tests

concerns dB, that strange logarithmic method we use to measure

power in fiber optics (and radio and electronics and acoustics

and more...). We've covered the topic several times in our

Newsletter but there still seems to be confusion. So we're

going to give you a clue to the answers and hopefully help you

understand dB better.

These are all correct statements, shown with the percentage of test takers who knew the statement was correct.

The one most often answered correctly: dBm is absolute power relative to 1mW of power. (78.8% correct. Does "absolute" confuse people? It's just "power", but absolute in contrast to "relative power", which is loss or gain measured in dB.)

This one is answered correctly less than half the time: dBm is absolute power like the output of a transmitter. (41.5% correct, see comment above.)

This one does not often get answered correctly: The difference between 2 measurements in dBm is expressed in dB. (23.8% correct)

Here is an example of a power meter measuring in dBm and

microwatts (a microwatt is 1/1000th of a milliwatt.)

Here is an example of the conversion of watts to dBm. This meter is reading 25 microwatts - that's 0.025 milliwatts. If we convert to dBm, it becomes -16.0dBm. We can easily figure this out using dB power ratios. -10dBm is 1/10 of a milliwatt or 0.100mW. -6dB below that is a factor of 0.25, so 0.1mW X 0.25 = 0.025mW or 25 microwatts. The other way to figure it is -10dB is 1/10 and -6dB is 0.25 or 1/4th (remember 3dB = 1/2, so 6dB = 3dB + 3dB = 1/2 X 1/2 = 1/4), so -16dBm is 1/40 milliwatt or 0.025 milliwatts or 25 microwatts.

Read a more

comprehensive explanation of dB here in the FOA Guide.
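If you would rather let a computer do the conversion, here is a short Python sketch of the standard dBm formula, 10 times the base-10 log of the power in milliwatts. The function names are ours, chosen only for this example.

```python
import math


def mw_to_dbm(power_mw):
    """Convert optical power in milliwatts to dBm (power relative to 1 mW)."""
    return 10 * math.log10(power_mw)


def dbm_to_mw(power_dbm):
    """Convert dBm back to milliwatts."""
    return 10 ** (power_dbm / 10)


reading_uw = 25                            # the meter reading in microwatts
reading_dbm = mw_to_dbm(reading_uw / 1000) # 1 microwatt = 1/1000 milliwatt
print(round(reading_dbm, 1))               # -16.0

print(round(dbm_to_mw(-10.0), 3))          # 0.1   (1/10 of a milliwatt)
print(round(dbm_to_mw(-16.0), 3))          # 0.025 (about 1/40 of a milliwatt)
```

The exact value for 25 microwatts is about -16.02dBm; the shortcut with 3dB = 1/2 is a rounded rule, which is why the two methods agree to a tenth of a dB rather than exactly.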

What's

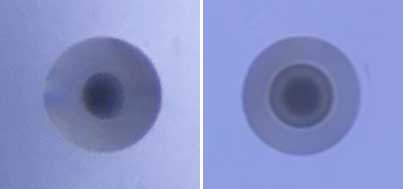

That Fiber?

A FOA Newsletter reader

sent FOA these microscope photos of two MM (multimode) fibers,

asking what was the difference with the one on the right. It is

a bend-insensitive (BI) fiber and compared to the regular

graded-index MM fiber you readily notice the index "trench"

around the core that reflects light lost in stress bends right

back into the core. You can read

more about bend-insensitive fiber in the FOA Guide.

What

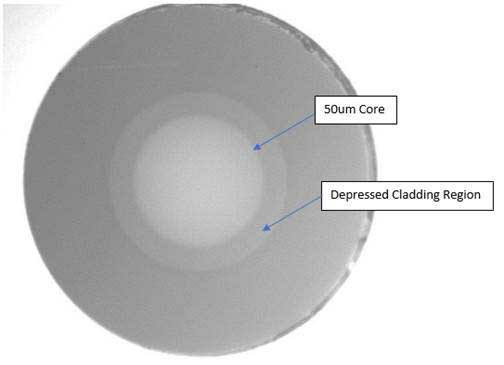

does Bend-Insensitive Fiber Look Like?

While

researching the answers to the question above, we talked to Phil

Irwin at Panduit. He mentioned that you could see the structure

of BI fiber and sent along this photo:

At the left, you can see the gray area surrounding the core,

shown in the drawing in the right as the yellow depressed

cladding region.

If you want to try to see it yourself, it's not easy. Phil tells

us that OFS fiber is the easiest to see, Corning a bit more

difficult. You need a good video microscope. You may need to

vary the lighting and illuminate the core with low level light.

Today most multimode (MM) fibers are bend insensitive fibers. If

you buy a MM cable or patchcord, it is probably made with

bend-insensitive fibers. That's generally good because these

fibers are less sensitive to bending or stress losses which can

cause attenuation in regular fibers.

Many singlemode fibers are bend-insensitive also, especially

those used with smaller coatings to pack more fibers into

microcables or high fiber count cables.

The

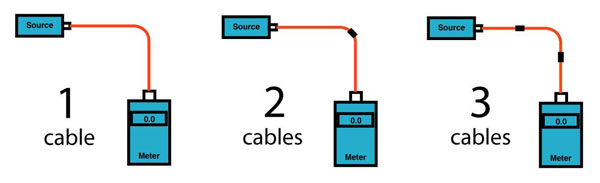

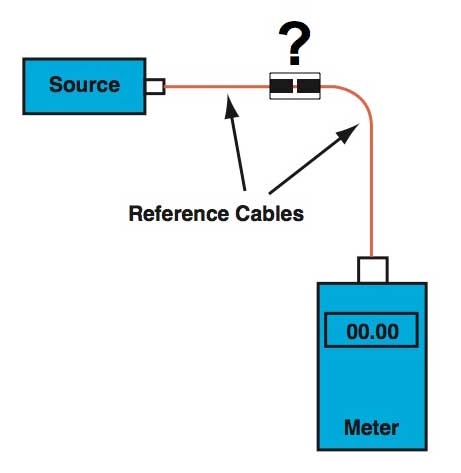

Perils Of 2-Cable Referencing

FOA

received an inquiry about fluctuations in insertion loss

testing. The installer was using a two cable reference

method for setting a "0dB" reference where you attach one

reference cable to the source, another to the meter and

connect them to set the "0dB" reference. The 2-cable

reference method is allowed by most insertion loss testing

standards, along with the 1- and 3- cable reference

methods, although each gives a different loss value.

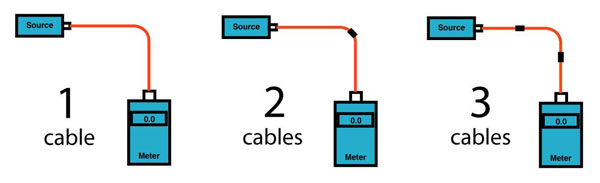

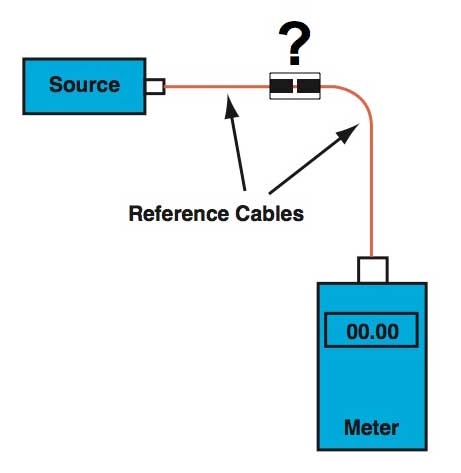

3 different ways to set a 0dB reference for loss testing

When a 1-cable reference is used, one sets a reference

value at the output of the launch cable and measures the

total loss. With a 2-cable reference, a connection between

the launch and receive reference cables is included in

making the reference, so the loss value measured will be

lower by the amount of that connection loss. The 3-cable

reference includes two connection losses so the loss will

be lower still.
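The effect of the reference method on the reading can be sketched in Python. The function name and the numbers are hypothetical (a 2.0dB link as measured with a 1-cable reference, and 0.3dB for each mated reference connection); real connection losses vary, which is exactly the uncertainty discussed in this article.

```python
def measured_loss_db(loss_1_cable_db, reference_cables, connection_loss_db):
    """Loss an OLTS would report for a given reference method (1, 2 or 3 cables)."""
    if reference_cables not in (1, 2, 3):
        raise ValueError("reference_cables must be 1, 2 or 3")
    # A 2-cable reference zeroes out one mated connection, a 3-cable reference two.
    connections_in_reference = reference_cables - 1
    return loss_1_cable_db - connections_in_reference * connection_loss_db


# Hypothetical example values, not measured data.
for n in (1, 2, 3):
    print(n, round(measured_loss_db(2.0, n, 0.3), 1))
# 1 2.0
# 2 1.7
# 3 1.4
```

Because the connection loss is subtracted from every later measurement, any error in that one mated pair carries into all of the results.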

The problem with the two cable reference is the

uncertainty added by including the connection between the

two reference cables when setting the "0dB"

reference.

Unless you carefully inspect and clean the two connectors

and check the loss of that connection before setting the "0dB"

reference, you add a large amount of uncertainty to

measurements of loss. The best way to use a 2-cable

reference is to set up the source and reference cable

(with inspected and cleaned connectors), measure the

output of the launch cable, attach the receive cable (with

inspected and cleaned connectors) and measure the loss of

the connection before setting the "0dB"

reference. If the connection loss is not less than 0.5dB,

you have connectors that should not be used for testing

other cables. Find better reference cables.

The two cable reference is often used when the connectors

on the cables or cable plant being tested are not

compatible with the connectors on the test equipment, so

you must use hybrid launch and receive cables. Then you

can only set the reference with the two cables connected to each other.

In that case, you need the 2-cable reference but should

expect lower loss and higher measurement uncertainty.

Experiments have shown that the uncertainty with a 1-cable

reference is around +/-0.05dB while the 2-cable has an

uncertainty of around +/-0.2 to 0.25dB caused by the

mating connection between the two reference cables. Those

experiments also showed the uncertainty of the 3-cable

reference was not significantly larger than the 2-cable

reference.

When possible, use a 1-cable reference. When you must use

the 2- or 3-cable reference, inspect and clean all

connectors carefully before making connections for the

reference or test.

Bottom Line:

- The value of loss you measure depends on how you set your "0dB" reference - more reference cables means less loss.

- Connections between reference cables when setting a 0dB reference add uncertainty to measurements

Troubleshooting With A VFL: Fibers Damaged In Splice Trays

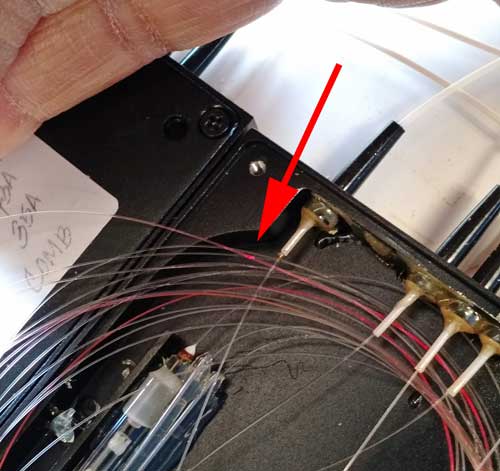

Is this a trend? Twice in one week, we had inquiries from readers with problems, and both were traced to fibers cracked when inserted in splice trays. The photo shows one of them illuminated with a VFL. This was the same issue we found in the first field trial of a VFL more than 30 years ago, which led to its popularity in field troubleshooting.

Photo courtesy

Alan Kojima.

Bottom Line:

- VFLs are invaluable troubleshooting tools for finding cable faults

- But they only work close by - 3-4km range max

How "Fast" Is Fiber?

We've probably all heard the comment that fiber optics sends signals at the speed of light. But have you ever thought about what that speed really is? The speed of light most people think about is C = speed of light in a vacuum = 300,000 km/s = 186,000 miles/sec. But in glass, the speed is reduced by about 1/3 by the material in the glass. The amount the light is slowed down is defined by the index of refraction of the glass: V = speed of light in a fiber = C/index of refraction of fiber (~1.46) = 205,000 km/s or 127,000 miles/sec. So in glass, the "speed of light" is about 2/3 C, the speed of light in a vacuum. And the difference in speed in different materials is what makes fiber work - causing "total internal reflection".

One

of the FOA instructors sent us this question: "I work

with at Washington Univ with an engineer who works for an

electrical utility. He asked a question about the speed of

signal transmission over fiber optics, single mode, at top of

towers. They need signal to be sent in 18 millisecs for relays

to function properly. Is there a problem over a distance of

150 miles?"

Let’s do a calculation:

C = speed of light in a vacuum = 300,000 km/s = 186,000

miles/sec

V= speed of light in a fiber = c/index of refraction of fiber

(~1.46) = 205,000 km/s or 127,000 miles/sec

150 miles / 127,000 miles/sec = 0.00118 seconds or ~1.2

milliseconds

Another way to look at it is 127,000 miles/sec X 0.018 seconds

(18ms) = 2,286 miles

So the fiber transit time is not an issue. The electronics

conversion times might be larger than that.
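The calculation above fits in a few lines of Python. The constants are the rounded values used in this article, and the function name is just for illustration.

```python
C_MILES_PER_SEC = 186_000   # speed of light in a vacuum, rounded as in the text
INDEX_OF_REFRACTION = 1.46  # approximate value for the fiber


def transit_time_ms(distance_miles, index=INDEX_OF_REFRACTION):
    """One-way transit time through the fiber in milliseconds."""
    speed_in_fiber = C_MILES_PER_SEC / index   # about 127,000 miles/sec
    return distance_miles / speed_in_fiber * 1000


t_ms = transit_time_ms(150)
print(round(t_ms, 2))        # 1.18
print(t_ms < 18)             # True: well inside the 18 ms relay budget

# Distance light covers in the fiber in 18 ms. The text rounds the speed
# to 127,000 miles/sec first and gets 2,286 miles.
reach_miles = C_MILES_PER_SEC / INDEX_OF_REFRACTION * 0.018
print(round(reach_miles))    # 2293
```

This covers only the time in the glass; it does not include any delay in the electronics at each end.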

I used to explain to classes that light travels about this fast:

300,000 km / sec

300 km / millisecond

0.3km /microsecond or 300m / microsecond

0.3 m per nanosecond - so in a billionth of a second, light

travels about 30cm or 12 inches

Since it travels slower by the ratio of the index of refraction, 1.46, that becomes about 20cm or 8 inches per nanosecond.

That is useful to know since an OTDR pulse 10ns wide translates to about 200cm, or 2 m, or 80 inches (6 feet and 8 inches), giving you an idea of the pulse width in distance in the fiber or an idea of the best resolution of the OTDR with that pulse width.
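Here is the same pulse-width arithmetic as a small Python sketch, using the rounded 0.3 m per nanosecond figure from above. The function name is ours, and the result is simply the length of the pulse in the fiber as described in the text, not a specification for any particular OTDR.

```python
C_M_PER_NS = 0.3            # light in a vacuum: about 0.3 m per nanosecond
INDEX_OF_REFRACTION = 1.46  # approximate value for the fiber


def pulse_length_m(pulse_width_ns, index=INDEX_OF_REFRACTION):
    """Length in meters that a pulse of the given width occupies in the fiber."""
    return pulse_width_ns * C_M_PER_NS / index


length_10ns = pulse_length_m(10)
print(round(length_10ns, 2))          # 2.05, i.e. about 2 m
print(round(pulse_length_m(1), 2))    # 0.21, i.e. about 20 cm per nanosecond
```

Longer pulses scale the same way, so a 100ns pulse is roughly 20 m long in the fiber.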

Bottom Line:

- Fiber is "fast" because of its bandwidth capability

- Light travels in fiber at the speed of light

- But the speed of light in glass is only 2/3 as fast as the speed of light in air or a vacuum

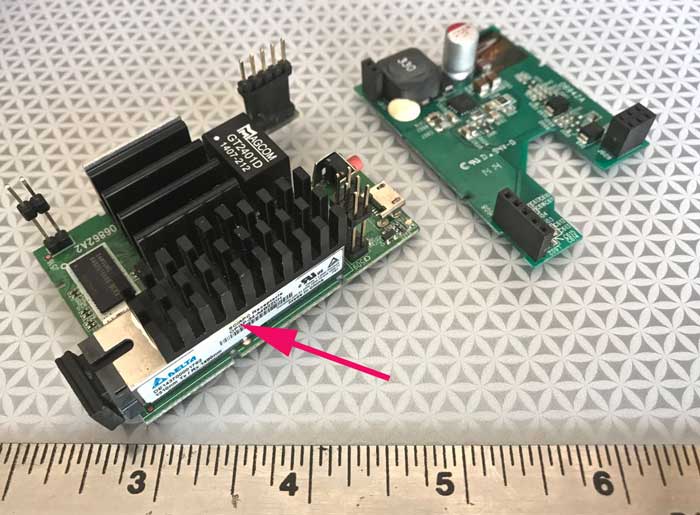

What Does A FTTH ONT Look Like Today?

That's all there is to the ONT that goes into the home. The

arrow points to the 1310 TX/1490 RX transceiver for SC-APC

connectors.

Note: That device is now priced at $10-20US. The incredibly high volume of FTTH components has driven the costs of these devices down to incredibly low levels. Today media converters for SM fiber are priced the same as those for MM. Multimode fiber is beginning to look obsolete.

Components

Here are several technologies that have continued growing in importance in the fiber optic marketplace - components that every tech needs to learn about and become familiar with using.

How

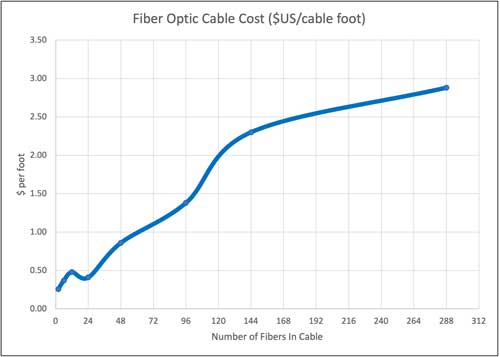

Many Fibers? - What's The Optimal Cable Size?

The idea

often arises to reduce the number of fibers in a cable and

therefore reduce the cable cost, assumed to be important on long

cable runs. But is the cost of fiber such a big part of the cost

of the cable plant? We decided to analyze cable costs for

standard loose tube cable capable of being pulled into conduit

for underground or lashed to a messenger for aerial

installation.

Gathering data was not easy, but we found several large,

reputable US distributors who listed prices for several types of

loose tube singlemode OSP cables from top cable makers. All

prices are for small quantities (km, not 10s or 100s of

km). Prices are how they were quoted, in $US per foot, so

our readers outside the US should feel free to convert into

another currency and meters.

This graph shows what we found:

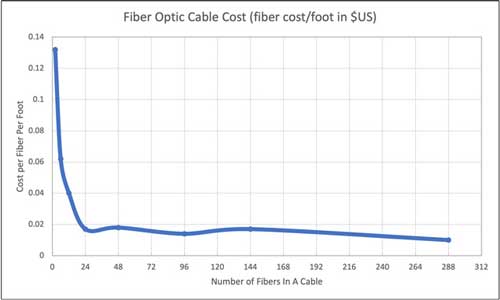

The curve

looks reasonable above 24 fibers, but unpredictable below that,

so we analyzed the data by cost per fiber per foot and got the

graph below.

The cost per fiber per foot increases rapidly below 24 fibers,

probably because the cost of making cable doesn't change much

with fewer fibers; it's the cost of the plastics, strength

members and manufacturing process that dominates the cost.

However, after 24 fibers, the cost settles down and slowly

decreases for higher fiber counts, reflecting then the cost of

the added fibers.

Another way to think of this is that below 24 fibers, you are

paying for the cable; above 24 fibers you pay for the fibers.

The thing to note of course is the cost of each fiber is less

than 2 cents per foot for any cable above 24 fibers. When OSP

construction costs are $5-25 or more per foot, the cost of fiber

seems to be quite cheap. Certainly installing cable with

additional fibers is very cost effective if it means having

fibers to expand the network without having to install another

cable. And, of course, that applies to urban and suburban

networks, not just rural.

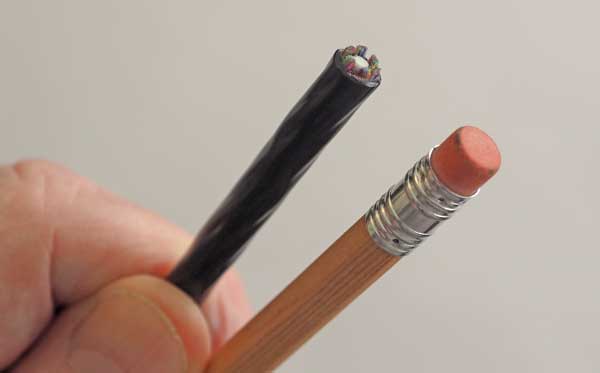

Micros: Microcables, Microducts and Microtrenching

144 fiber Corning MiniXtend cable is smaller than a pencil

Microcables, microducts and microtrenching, three technologies that have more in common than the prefix "micro", are gaining acceptance along with blown cable, the obvious method of installing them. Smaller is always better when it comes to crowded ducts, and duct congestion is a problem in practically every city we have contact with.

Bottom Line:

- Like everything else, cables keep getting smaller

- Microcables work well with microducts and microtrenching

- Installers need to become familiar with "blown cable" technology

- These technologies are already accepted in the marketplace

Fiber

Ducts

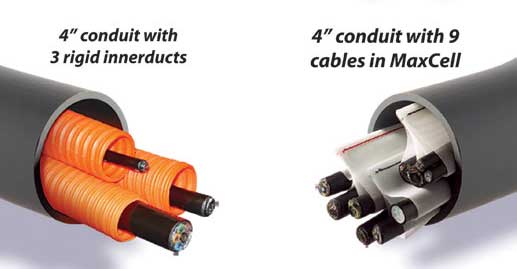

With the

demand for more fiber for smart cities services like small

cells and smart traffic signals, not to mention a ton of other

smart cities services, installing more cables in current ducts

- without digging up streets - is a major interest. Sometimes

it's possible to install microducts in current ducts with a

cable and blow in a new microcable. Sometimes it's worth it to

pull an older cable out and install a new microduct that will

accommodate 6 cables, making room for future expansion. The

makers of the fabric ducts, MaxCell, can even show you how to

remove the ducts in conduit without disturbing the current

cables and pull in fabric ducts to install more cable.

Comparison of MaxCell ducts to rigid plastic duct

Microducts are small ducts for blowing in cable. In the space of a traditional fiber duct, you can fit six microducts for six 288 fiber cables.

Microducts And Microtrenching

Nearly invisible microtrenching

If you

have to trench, microtrenching is probably the best choice for

cities and suburbs. Rather than digging wide trenches or using

directional boring (remember the story about the contractor in

Nashville, TN using boring to install fiber who punctured 7

water mains in 6 months?), microtrenching is cheaper, faster

and much less disruptive.

All of

this implies that contractors are willing to invest in new

machinery and training, sometimes an optimistic assumption.

Microtrenching machines and cable blowing machines are

available for rent, but personnel must be trained in the

design of networks using these technologies and operating the

actual machinery in the field. That's still a considerable

investment.

Bottom Line:

- Cables and ducts are getting smaller, allowing more and more fibers in the same space

- Microtrenching allows "construction without disruption"

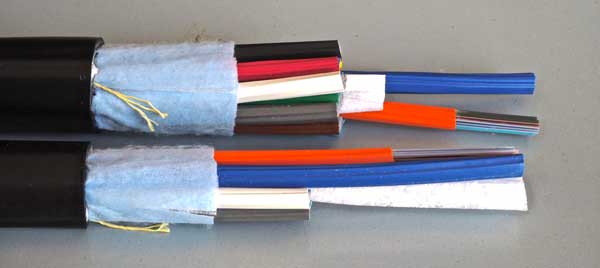

High Fiber Count Cables

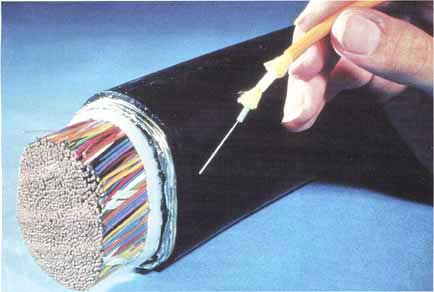

More manufacturers are introducing high fiber count cables -

864, 1728, 3456 or even 6,912 fibers. Like this one from

Prysmian with 1728 fibers: The applications were first in

large-scale data centers but are also seeing use in dense

urban centers to support FTTH and cellular small cell systems.

These cables use bend-insensitive fibers to allow high density

of fibers without worrying about crushing loads affecting

attenuation. Most also use fibers with 200 micron buffer

coatings instead of 250 micron

buffer coatings to allow even higher density. Many, or even

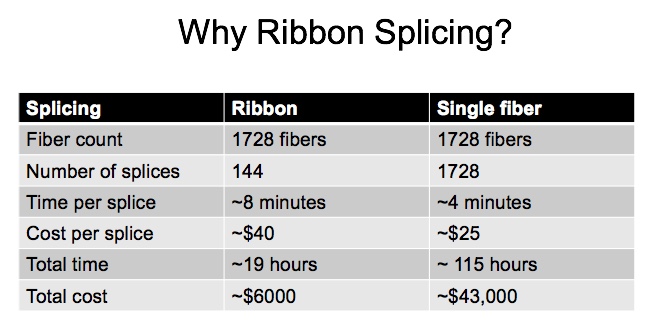

most, use ribbons of fiber, either the conventional hard

ribbons or the newer flexible ribbons, since, as we show

below, the time to splice even a 1728 fiber cable is

extremely long unless ribbon splicing is used.

High fiber count cables may not be for everyone. Maybe only for a very few. A single cable that has as many fibers as twelve 144 fiber cables (1728 fibers), with a diameter of only twice that of a conventional 144 fiber cable, can present challenges.

- First of all, the cost - it's high. You do not want to waste cable at this price. Engineering the cable length precisely will save lots of money. And it's worse for higher fiber counts.

- Likewise, making mistakes when preparing the cable for termination can be expensive.

- The cable may require special preparation procedures to separate fibers for termination. Most use new methods of identifying cables and bundles.

- Besides skill, the tech working with high fiber count cables needs lots of patience.

- Splicing multiple cables at a joint can get complicated when keeping all the fibers straight.

- These cables will generally use 200 micron buffered fiber and often a flexible ribbon instead of a typical rigid ribbon structure to reduce fiber sizes. This may complicate splicing, as the methodology to splice the flexible ribbons and to splice 200 micron fibers to regular 250 micron fibers is a work in progress.

- Splicing 200 to 250 micron fibers may be easier with the flexible ribbon designs, which make it easier to spread fibers to the same spacing.

- We've heard the splicing time for flexible ribbons is about 50-100% longer than that of conventional rigid ribbons. So if you use the table below, you may need to increase your ribbon splicing estimates when working with flexible ribbons.

We've been looking for directions on how to deal with high fiber

count cables. Several contractors tell us ribbon splicing is the

way to go, and most of these cables now use a version of the new

ribbon types that are flexible. We've put together this

table from some articles on splicing ribbons:

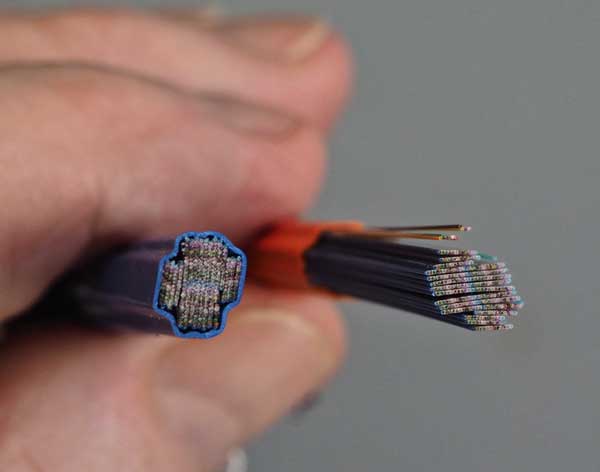

Corning generously sent FOA some samples of 1728 and 3456 fiber "RocketRibbon™" cable. We took some photos and must admit that these cables are fascinating updates on the traditional fiber optic cables.

Here are Corning RocketRibbon 1728 fiber (bottom) and 3456 fiber (top) cables. To get an idea of these cables' size, look at this photo:

The 3456

fiber cable is 32mm diameter, 1.3 inches. The 1728 fiber cable

is 25mm, 1 inch diameter.

These are cables made from conventional "hard" ribbons, not the

"flexible" ribbons used on some cable designs. As a result of

using hard ribbons, the fibers are arranged in regular patterns

to get high density.

These are

the tubes of ribbons from these cables. Each of those tubes of

ribbons has the equivalent of 24 ribbons of 12 fibers each

(actually 8 X 12 fibers and 8 by 24 fibers stacked up) for 288

fibers total. The 1728 fiber cable has 6 tubes and a center foam

spacer, with 144 ribbons total. The 3456 fiber version has 12

tubes and no spacers, 288 fiber ribbons total.
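
The ribbon and fiber counts above are easy to check with a few lines of Python. This is only a sketch of the arithmetic; the constant and function names are ours.

```python
FIBERS_PER_RIBBON = 12
RIBBONS_PER_TUBE = 24  # 12-fiber ribbon equivalents per tube, as described above

# Each tube stacks 8 ribbons of 12 fibers and 8 ribbons of 24 fibers.
fibers_per_tube = 8 * 12 + 8 * 24
assert fibers_per_tube == RIBBONS_PER_TUBE * FIBERS_PER_RIBBON == 288


def cable_totals(tubes: int) -> tuple[int, int]:
    """Return (12-fiber ribbon equivalents, total fibers) for a cable."""
    ribbons = tubes * RIBBONS_PER_TUBE
    return ribbons, ribbons * FIBERS_PER_RIBBON


for tubes in (6, 12):
    ribbons, fibers = cable_totals(tubes)
    print(f"{tubes} tubes: {ribbons} ribbons, {fibers} fibers")
```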

What amazes us is the density of fibers.

We calculated the "fiber density" of this 3456 fiber cable based

on 200 micron buffered fibers and determined that 54% of the

cable is fiber. Compare that to a typical 144 fiber loose tube

cable, which is about 14% fiber or a 144 fiber microcable which

is about 36% fiber.

Note: We're hearing rumors that the new high fiber

cables are getting fibers broken

during installation with the possible cause(s) being exceeding bend

radius or pulling tension, using improper installation equipment or

maybe even the cable designs. We're investigating this and will report

back in the near future. But please ensure installers follow

manufacturer's recommendations carefully.

Note: From a reliable source within the industry: Within a couple of years, the old inflexible

hard ribbon cables will be extinct. Everything will be flexible ribbons

and mostly 200 micron fibers and BI (bend insensitive) fibers (G.657). Besides

changing how these cables are handled, one thing will be lost - the

ability to print ID info on the ribbons so matching fibers to splice

will be more difficult.

Looking at the end of this cable reminded us of nothing so much

as this PR photo from AT&T from their introduction of fiber optics in

1976:

Not the fiber, the dense cable of copper pairs!

Of course the cable is much lighter than copper but much heavier

than you are used to with fiber - it weighs 752 kg/km or about

1/2 pound per foot. And it's stiff. Very stiff. The minimum bend

radius is 15 times the cable diameter or 480mm (~19 inches),

about a meter or yard in diameter.
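
The bend radius arithmetic is worth keeping handy when you plan vaults and reels. Here is a small sketch, assuming the 15X multiplier quoted above; always check the manufacturer's data sheet for the actual value. The function name is ours.

```python
MM_PER_INCH = 25.4


def min_bend(cable_diameter_mm: float, multiplier: float = 15) -> tuple[float, float]:
    """Return (minimum bend radius, smallest loop diameter) in mm."""
    radius = multiplier * cable_diameter_mm
    return radius, 2 * radius


for name, diameter_mm in (("3456 fiber", 32), ("1728 fiber", 25)):
    radius, loop = min_bend(diameter_mm)
    print(f"{name}: radius {radius:.0f}mm ({radius / MM_PER_INCH:.0f} in), "
          f"loop diameter {loop:.0f}mm ({loop / MM_PER_INCH:.0f} in)")
```

For the 32mm cable this gives a 480mm radius and a 960mm loop, and for the 25mm cable a 375mm radius and a 750mm loop.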

As we noted in the photo above, Ian Gordon Fudge of FIBERDK

taught some data center techs how to handle a 1728 fiber

Sumitomo cable with a slotted core. Ian sent FOA this photo to

illustrate the number of fibers in the cable he was using for

training. Impressive!

Here is the slotted core that separates the flexible fiber

ribbons

in the Sumitomo cable:

More on high

fiber count cables and our continuing coverage.

High fiber count cables are all ribbon cables, some with hard

ribbons and some with flexible ribbons. All require ribbon

splicing because of the construction and the time it would take

to terminate them. This is a table of estimated termination

times. Is that realistic? We've heard the flexible ribbons may

take 50-100%

longer than conventional ribbons due to the need to

carefully arrange and handle fibers.

High

Fiber Count Cables - Continued Updates - Installation

Continuing

our ongoing research on high fiber count cables, last month we

were invited to visit Corning's OSP test and training facility

to experience the processes of installing these cables for

ourselves. We had the opportunity to handle some of these cables

ourselves and see how experienced techs worked with this cable.

Once you get a chance to handle this cable and see how big,

stiff and heavy it really is, you understand that it's quite

different from any fiber optic cable you have worked with, with

the possible exception of some hefty 144/288 fiber loose tube

cable that's armored and double jacketed. With a bend radius of

15X the diameter of the cable, the minimum bend radius of a 1728

fiber cable is 15" (375mm) and that's a 30" (750mm - 3/4 of a

meter) diameter. Just the reel it's shipped on is outsized - it

should have a ~750mm (30 inch) core and will be probably ~1.8m

(6 feet ) in overall diameter. 3300 feet (1km) of this cable

will weigh 550-750kg (1200-1700 pounds.) and the reel will weigh

another ~300-400kg (700-900 pounds). Will that fit on your

loading dock? Can you handle a ton of cable? (Metric or English)
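
To answer that question for your own job, add the cable and reel weights. The sketch below uses the ranges quoted above for 1km of cable; the function name is ours and the conversion factor is the standard 2.20462 pounds per kilogram.

```python
KG_TO_LB = 2.20462


def shipping_weight_kg(cable_kg_per_km: float, length_km: float, reel_kg: float) -> float:
    """Total weight of a loaded reel: cable plus the reel itself."""
    return cable_kg_per_km * length_km + reel_kg


lightest = shipping_weight_kg(550, 1.0, 300)
heaviest = shipping_weight_kg(750, 1.0, 400)
print(f"Loaded reel: {lightest:.0f}-{heaviest:.0f} kg "
      f"({lightest * KG_TO_LB:.0f}-{heaviest * KG_TO_LB:.0f} lb)")
```

That works out to roughly 850-1150kg for a loaded reel, so "a ton" is a fair description in either system of units.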

I tried bending one of the 1728 fiber cables and (with the

manufacturer’s OK) tried to break it. The 1728 fiber cable I was

bending took an enormous amount of muscle to bend, and when I

got down to about an 8 inch radius, it broke, with a sound like

a tree limb of similar diameter cracking. In the field, that

would have been an expensive incident.

The stiffness of these cables affects the choice of other

components and hardware. You will not fit service loops into a

typical handhole, you need a large vault like the one shown in

the photos taken at Corning. You will also need close to 100

feet (30m) of cable for a service loop. You may need to figure 8

the cable on an intermediate pull and that will require lots of

space and a crew to lift the cable to flip it over.

This 1728 fiber cable is stiff, does not easily twist and only

bends in one direction because there are stiff strength members

on opposite sides of the cable. Placing it into a manhole or

vault and fitting service loops into it is not easy. In this

case, it helped to have two people and one was the trainer. You

need to have a "feel" for the cable - how it bends and twists -

to make it fit. The limits of bend radius, stiffness and

unidirectional bending makes it necessary to work carefully with

the cable to fit loops into the vault. Sometimes it's necessary

to pull a loop out and try in a different way to get it to fit.

But it can be done as you see at the right.

Pulling

the cable out of conduit in the vault without damaging it also

requires care. You can see in the back the orange duct coming

into this vault. When pulling the cable, it's important to not

kink the cable while pulling it out of a duct. A length of

stiff duct can be attached to the incoming duct to limit bend

radius. Capstans, sheaves and radius cable sheaves need to be

chosen to fit the required cable bend radius. A radius cable

sheave with small rollers can damage the cable under tension

and is not a good choice unless the rollers are used with a

piece of conduit to just set the bend radius.

Corning also showed us a new feature of their RocketRibbon

Cables. A high fiber count cable has a lot of fibers, even a

lot of ribbons, so identifying ribbons can be a problem. In

addition to printing data on each ribbon, Corning now tints

the ribbons with color codes to simplify identification. Great

idea.

Tight

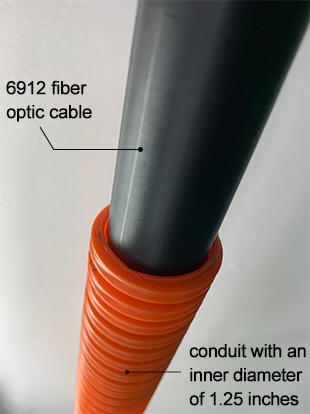

Fit: 6912 Fiber Cable Pulled in 1.25 inch Conduit

Furukawa

Electric Co., Ltd. (FEC) conducted an experiment in its Mie,

Japan facility to demonstrate the installation of a 6912-fiber

optic cable with an outer diameter of 1.14 inches (29 mm) in a

656 foot (200m) long conduit with three 90 degree curves and an

inner diameter of 32mm. The conduit used was a standard product

installed in conventional data center campuses. Engineers

confirmed a maximum pulling tension of 84 pounds (372N), well

below the maximum pulling tension of 600 pounds (2700N)

specified for the cable.

The cable was installed in a 1.25 inch (32mm) conduit with a

maximum length of 1,411 feet (430m) in a North American data

center campus in 2020 to support live traffic. The high fill

ratio in this application is not typically recommended for

Outside Plant (OSP) cable installation. However, in this

application, the end-user was willing to accept the installation

risk in return for maximum fiber density. The installation

demonstrated that FEC’s 6912 fiber optic cable can be

successfully installed into 1.25 inch (32mm) conduit using

appropriate tools, work procedures, and optimum installation

conditions.
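
To put a number on that "high fill ratio", here is a minimal sketch that compares cross-sectional areas using the 29mm cable diameter and 32mm conduit inner diameter quoted above, and also checks how much of the rated pulling tension was used. The function names are ours, and the area-based definition of fill ratio is a common convention we are assuming here.

```python
def fill_ratio(cable_od_mm: float, conduit_id_mm: float) -> float:
    """Fraction of the conduit's cross-sectional area occupied by the cable.
    Both areas are circles, so the pi/4 factors cancel."""
    return (cable_od_mm / conduit_id_mm) ** 2


def tension_used(measured_lb: float, rated_lb: float) -> float:
    """Fraction of the cable's rated pulling tension actually reached."""
    return measured_lb / rated_lb


print(f"Fill ratio: {fill_ratio(29, 32):.0%}")
print(f"Pulling tension used: {tension_used(84, 600):.0%} of rating")
```

The cable fills about 82% of the conduit area, which shows why the installation depended on appropriate tools and optimum conditions, even though the measured tension was only about 14% of the rating.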

“The FEC 6912 fiber optic cable at least doubled the fiber count

possible in a 1.25 inch conduit, compared to competing available

designs,” said Ichiro Kobayashi, General Manager of optical

fiber & cable engineering department, FEC.

Furukawa

PR also on OFS

Website. OFS is a FEC company.

Bottom Line

- High

fiber count cables allow extremely high fiber counts in

small cable sizes, perfect for dense applications in data

centers and metro areas

- With

so many fibers, ribbon splicing is the only sensible way

to splice them

- Ensure

your splicing machines can handle 200 micron buffered fibers

- Because

bend radius limits are so high, they require special

consideration for installation and storage - BIG manholes

for example

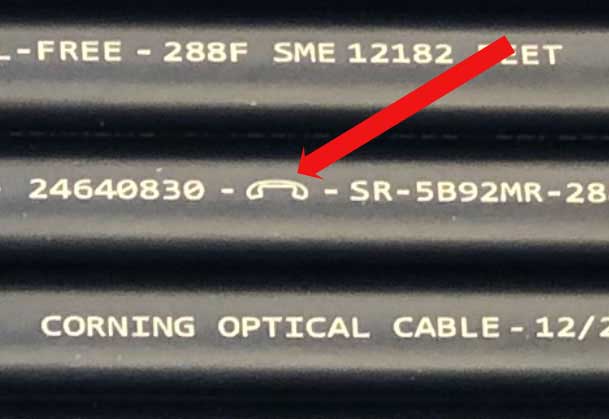

Cable

Marking Mystery

You are all

familiar with the information printed on a typical fiber optic

cable which includes the manufacturer, how many fibers in the

cable and distance markings, plus sometimes other information

like the manufacturer's part number.

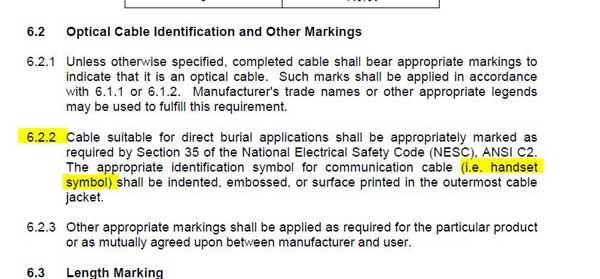

But recently two people made reference to the small symbol that

looks like an old-style telephone handset. One thought the

manufacturer of the cable used that symbol to show where the

helical winding of the buffer tubes reversed, a reference point

for preparing the cable for midspan access. Another thought it

was to indicate this was a telecom cable not a power cable.

FOA has been reaching out to people at cable companies to see if

anyone has a definitive answer as to what this symbol means, and

the answer comes from Rodney Casteel and his engineers at

Commscope.

"The handset symbol is mandatory for cables “suitable for direct

burial applications” per ICEA 640 and Telcordia GR-20. I

think this handset symbol started a long time ago so data cables

could be identified if they were dug up. My guess is the first

standard to mandate this was Telcordia GR-20 Issue 1 back in the

80s."

From ICEA-640:

And Bellcore/Telcordia GR-20:

New

Connectors

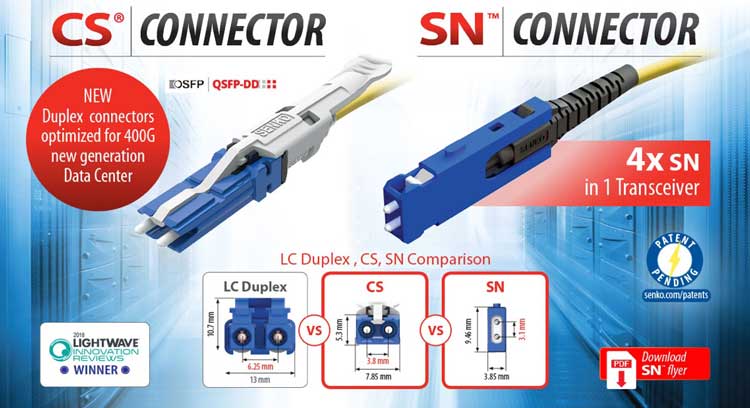

We're

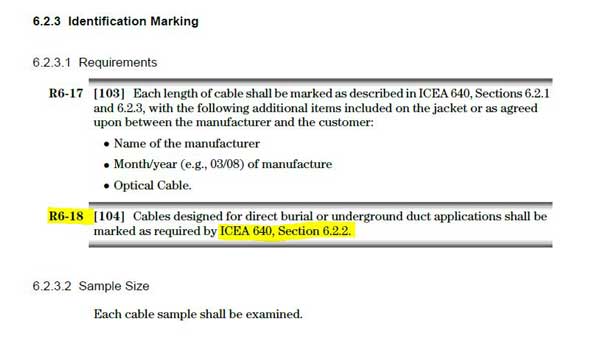

seeing some interesting new connectors being introduced. 3M

announced a multifiber array connector using expanded beam

technology and several new ideas of making a duplex connector

smaller.

3M

Expanded Beam Connector

3M

3M

Details are

sketchy but from the video on the 3M website, the connection is

made by a small plastic fixture that is shown by the arrow in

the top photo. The plastic seems to turn the beam 90 degrees so

the connection is made when two pieces overlap, in the

direction of the arrow in the lower photo. The connectors are

hermaphroditic - that is two identical connectors can mate.

There are models for singlemode and multimode fibers and you can

stack the connection modules to handle up to 144 fibers. We

understand this was not part of the 3M fiber optic product line

recently acquired by Corning. 3M

Expanded Beam Connector.

For more information on expanded beam connectors, see the FOA

Newsletter for October 2018 that discusses the R&M

QXB, another multifiber expanded beam connector announced last

Fall.

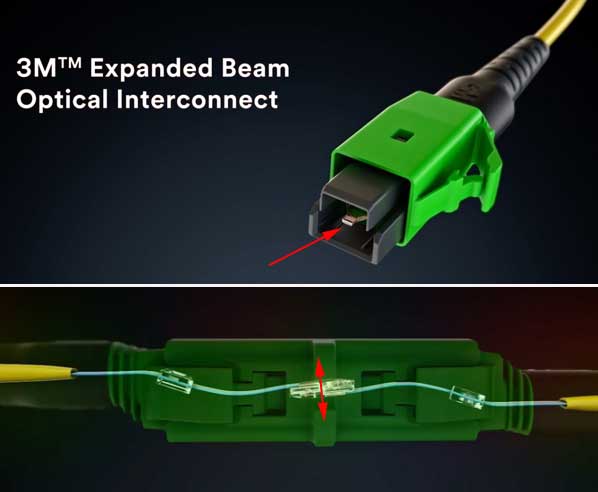

SENKO

CS and SN

In the FOA Newsletter for January 2018, we featured

the SENKO CS connector, a miniature duplex connector using two

1.25mm ferrules, but much smaller than a duplex LC. The CS is

well on its way to becoming standardized with a FOCIS (fiber

optic connector intermateability standard), but on the SENKO web

page, there is another new connector, the SN, that makes the SC

look huge! The big difference is the vertical format that allows

stacking connectors very close. That can allow transceivers to

have more channels, a big plus for data centers. Here

is more information on the SENKO CS and SN connectors.

SENKO

Comparison of SENKO CS (L) and SN (R) connectors with duplex LC.

US Conec MXC

and MDC

Connectors

The R&M and 3M expanded beam multifiber connectors

reminded us that US Conec introduced the

MXC

connector over 5 years ago, using similar technology for up to

64 fibers per connector. The MXC is on the US Conec website, but

seems to be aimed at board level connections, not far off its

original purpose as a connector for silicon photonic circuits.

But when we checked the US Conec website, there was a connector

name we did not recognize, the MDC. The MDC (below) is a

vertical format duplex connector using 1.25mm ferrules that

looks similar to the SENKO SN above. Here

is information on the US Conec MDC duplex connector.

US

Conec US

Conec

It's All About The Data Center

Just like the high fiber count cables discussed above, the CS,

SN and MDC connectors are aimed at high density cabling and

transceivers for data centers. All three are specified for the

new QSFP-DD

pluggable transceiver multi-source agreement.

Bottom Line:

- Like

everything else, connectors keep getting smaller

- Too

early to determine if they will be accepted in the

marketplace and can compete with LCs

Splice-On

Connectors

Terminating

with SC SOC in EasySplicer

Termination

has been seeing greater acceptance of the SOC - splice-on

connector - using fusion splicers. Its popularity started in

data centers for singlemode fiber where the number of

connections is very large so the cost of a fusion splicer is

readily amortized and the speed of making connections is the

real cost advantage. The performance of SOCs is much better

than prepolished/splice

(mechanical splice) connectors simply because of the

superiority of a fusion splice and the cost of the SOCs is

much less since they do not have the complex mechanical splice

in the connector.

We have

used SOCs in training and the techs take to them readily. In

classes you can combine splicing and termination in one session.

The cost of fusion splicers has been dropping to near the cost

of a prepolished/splice (mechanical splice) connector kit so the

financial decision to use SOCs is easier to make.

Bottom

Line:

- Splice-On

Connectors (SOCs) are easy to install, low loss and low

cost

- Less

hardware than pigtail splicing

- Premises

or OSP

Splice-On

Connector Manufacturers and Tradenames

7/2020

FOA Master Instructor Eric Pearson of Pearson

Technologies shared a list he has researched of

prepolished splice connectors with mechanical splices and SOC -

splice-on connectors for fusion splicing. This list shows how

widespread the availability of these connectors has become,

especially the SOCs and low cost fusion splicers.

Mechanical Splice

1. Corning Unicam® (50, 62.5, SM)

2. Panduit OptiCam® (50, 62.5, SM)

3. Commscope Quik II (50, 62.5, SM)

4. Cleerline SSF™ (50, SM)

5. LeGrand/Ortronics Infinium® (50, 62.5, SM)

6. 3M/Corning CrimpLok (50, 62.5, SM)

7. Leviton FastCam© (50, 62.5, SM)

Fusion Splice

1. FIS Cheetah (???)

2. Inno (50, 62.5, SM)

3. Corning FuseLite® (50, SM)

4. FORC (50, 62.5, SM)

5. Siemon OptiFuse ™ (SM, MM)

6. Belden OptiMax?? FiberExpress (SM, MM)

7. AFL FuseConnect® (SM, MM)

8. OFS optics EZ!Fuse ™ (50, 62.5, SM)

9. Sumitomo Lynx2 Custom Fit® (50, 62.5, SM)

10. Commscope Quik-Fuse (50, SM)

11. Ilsintech Pro, Swift® (50, 62.5, SM)

12. LeGrand/Ortronics Infinium® (50, 62.5, SM)

13. Greenlee (50, 62.5, SM)

14. Hubbell Pro (50, SM)

15. Easysplicer (SM)

Note: There are additional manufacturers from the People's

Republic of China, which advertise on Amazon and eBay.

Installation

Midspan

Access - Simplifying Installation Of Drops

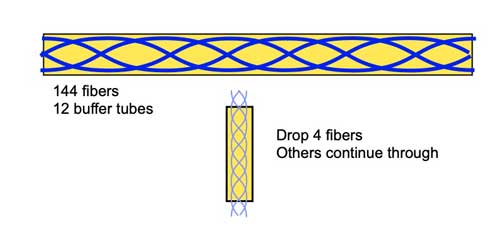

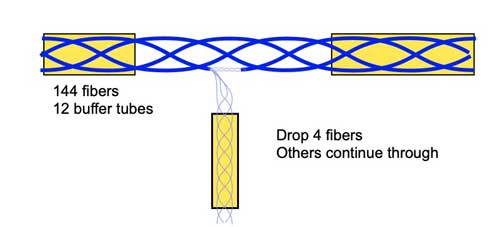

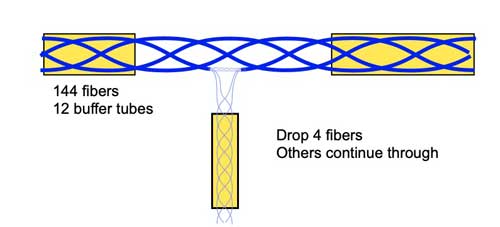

Many

installations involve dropping a small fiber count cable from a

large backbone cable. Backbone cables of 144-288 fibers are

common and larger ones are becoming more common too. Drop cables

are often only 2-14 fibers, meaning most fibers are continuing

straight through the drop point. Midspan access involves opening

the cable by removing the jacket and strength members, opening

the buffer tube and splicing only the fibers being dropped at

that point. The untouched buffer tubes from the opened cable are

carefully rolled up and stored in the same splice closure as the

fibers that will be separated and spliced to a drop cable.

If there is a method of splicing only the 4 drop fibers instead

of the 144 fibers, we will only have 4 splices instead of 144 or

146 depending on the architecture of our system. The difference

is according to how the drop is configured.

If you are building a star network where every drop links back

to the origin of the network, you will splice 4 fibers in the

cable to the drop cable, leaving 4 splices on 4 fibers (instead

of 144 splices if the backbone cable is cut and respliced).

If you are building a ring network, you may only be splicing two

fibers going to the drop and two others that are continuing

along the ring network.
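
The splice counts above can be sketched in a few lines of Python. The function names are ours, and the ring case reflects one reading of the description: each ring fiber is cut at the drop and both ends are spliced to the drop cable, so it needs two splices.

```python
def splices_star(backbone_fibers: int, dropped_fibers: int) -> tuple[int, int]:
    """Return (midspan access, cut and resplice) splice counts for a star drop."""
    midspan = dropped_fibers
    # Cutting the cable means every fiber is spliced: through fibers are
    # rejoined and dropped fibers are spliced to the drop cable.
    cut_and_resplice = backbone_fibers
    return midspan, cut_and_resplice


def splices_ring(backbone_fibers: int, ring_fibers: int) -> tuple[int, int]:
    """Return (midspan access, cut and resplice) splice counts for a ring drop."""
    midspan = 2 * ring_fibers  # in and out of the drop for each ring fiber
    cut_and_resplice = (backbone_fibers - ring_fibers) + 2 * ring_fibers
    return midspan, cut_and_resplice


print("Star, 144 fibers, 4 dropped:", splices_star(144, 4))
print("Ring, 144 fibers, 2 looped:", splices_ring(144, 2))
```

Under these assumptions the star drop needs 4 splices instead of 144 and the ring drop needs 4 instead of 146.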

All this may seem obvious but in actual practice requires some

knowledge, skills and careful workmanship to do a proper job.

Fortunately, manufacturers of cables and tools have good

information available online on how to do it, and FOA Master

Instructor Joe Botha has provided FOA with an application

note on how midspan access is done in his classes also.

The basic process is simple. We will look at a loose tube cable

but processes exist for ribbon cables also. You remove the

jacket of the cable for a specified length according to the

cable type and splice closures used. After removing the cable

jacket, you remove unnecessary strength members, leaving enough

of the stiff central member on both ends to attach to the splice

closure. Identify the tube with the fibers to be spliced to the

drop cable and set aside while carefully coiling the other tubes

for storage in the closure.

To open the buffer tube, you need a midspan access tool that

shaves off a section of the tube to allow removal of the fibers

without damaging them. Here are two types of Miller tools that shave

the tube:

After

shaving the tube and removing the fibers - count carefully to

ensure you remove all the fibers! - you can cut the tube off to

have bare fibers only for the length you need to splice on the

drop cable. All these fibers will be placed in a splice tray for

safe storage but only the fibers being dropped will be cut and

spliced to the drop cable. This is what the closure will look

like, ready for splicing the drop cable.

In the case

of the particular user who contacted us, not every drop would

use midspan access. His cable plant was 15 miles (25km) long with

roughly 17 locations where cable drops were needed. The cable he

was using could only be made in 5km lengths, so there would have

to be several locations where the cable would be spliced in the

25km run.

The design would need to carefully determine how much cable was

needed along each section of the route, including lengths for

service loops and midspan access or splicing, to determine which

drop points would be using midspan access and which would be

used as splice points for the entire cable.
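
A first pass at that planning can be sketched as follows. This is a simplified model under our own assumptions: every reel joint is placed at a drop location, and the optional slack allowance per reel (for service loops and splicing) is a hypothetical figure you would replace with your own.

```python
import math


def reel_plan(route_km: float, reel_km: float, drops: int,
              slack_km_per_reel: float = 0.0) -> tuple[int, int, int]:
    """Return (reels needed, full-cable splice points, midspan access drops)."""
    usable_km = reel_km - slack_km_per_reel
    reels = math.ceil(route_km / usable_km)
    splice_points = reels - 1
    # Assumes each reel-to-reel joint is located at one of the drop points.
    midspan_drops = drops - splice_points
    return reels, splice_points, midspan_drops


print(reel_plan(25, 5, 17))                         # no slack allowance
print(reel_plan(25, 5, 17, slack_km_per_reel=0.2))  # hypothetical 200m per reel
```

With no slack allowance, the 25km route needs 5 reels, 4 full splice points and 13 midspan access drops. Reserving even 200m per reel pushes that to 6 reels, which is why the lengths need to be worked out carefully before ordering cable.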

That's why fiber optic network design is important but sometimes

complicated.

Search online for "midspan access" to find lots of application

notes and videos on the subject. Or talk to your fiber optic

cable vendors.

FOA

Guide Page on Midspan Access

Nanotrenching

Failure In Louisville, KY

Google

Fiber tried a new way to install cable in Louisville, KY,

that turned out to be a very expensive failure.

Nanotrenching is what some call very shallow trenching for

installing fiber optic cable - see the photo below - and

filling with rubber cement. It did not work.

Chris

Otts, WDRB Louisville,

Feb 7, 2019

LOUISVILLE, Ky. (WDRB) – Google Fiber is leaving Louisville

only about a year after it began offering its superfast

Internet service to a few neighborhoods, citing problems

with the method it used to build the network through shallow

trenches in city streets.

The shut off will happen April 15, said Google Fiber, a unit

of Silicon Valley tech giant Alphabet, in a blog post

Thursday.

Google Fiber has served about a dozen cities, and Louisville

is the first it has abandoned.

Shortly after Google announced Louisville as a possible

location in 2015, the Metro Council passed a utility pole

ordinance at Google’s behest, then spent $382,328 on outside

lawyers to defend the ordinance in lawsuits from AT&T

and the cable company now called Spectrum.

Mayor Greg Fischer said in early 2016 that Louisville’s

landing Google Fiber was “huge signal to the world.”

Louisville’s public works department allowed Google Fiber to

try a new approach to running fiber – cutting shallow

trenches into the pavement of city streets to bury cables.

It led to a lot of problems, including sealant that popped

out of the trenches and snaked over the roadways.

Louisville street, Copyright

2019 WDRB Media. Reproduced with permission.

“It feels like you are using us for a

science-fair experiment,” Greg Winn, an architect who lives

on Boulevard Napolean, told Google Fiber representatives

during a Belknap Neighborhood Association meeting last year.

“…Our streets look awful.”

Google Fiber would go on to fill in the trenches with

asphalt, what company executives said was like filling a

60-mile long pothole.

Google Fiber never ended up using the utility pole law -- a

policy called One Touch Make Ready -- that Louisville passed

at its behest, as the company only buried its wires instead

of attaching them to poles.

A public relations representative for Google Fiber said no

one was available for an interview.

In written responses, the spokesman said Google Fiber

initially chose not to use the utility pole access because

of "uncertainty" about whether the ordinance would hold up.

Now that it has cut trenches in the streets, the company has

no desire to start over.

"Even using (One Touch Make Ready), we’d need to start from

scratch, and that’s just not feasible as a business

decision," the spokesman said.

FOA:

Be sure to watch the video from WDRB.

Copyright 2019 WDRB Media. Reproduced with permission.

Bottom

Line:

- Before

you try some new idea, ask some experienced installers

what they think

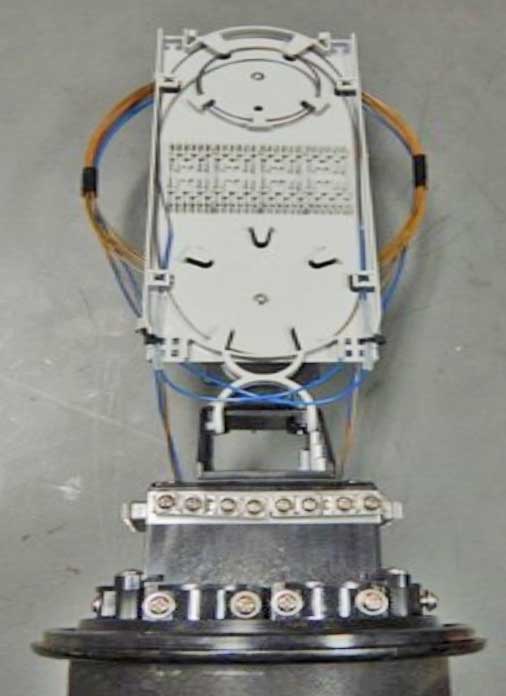

Manhole/Handhole

Size

Q: What do you

recommend when it comes to manhole/handhole sizing? If

they are being used for splicing, do you have a general formula

of length of splice closure plus X factor more for cables in/out

of closure and slack storage?

A:

FOA has been doing some research on underground construction to

expand our section in the FOA

Guide. We are looking at what people are specifying on

some projects since we do not know of any industry standards.

There are links of some interesting/useful information below.

From our standpoint, the minimum size would be determined by the

bend radius of the fiber optic cable (see

article above), how much slack (service loops) would be

stored (slack from how many cables - see photo below) size of

the splice closures, and how many ducts and cables would be

served. Generally you will have 20-30 feet in service loops to

allow for splicing. Typical cable up to 1/2” needs a loop

>20” but I don’t know how you would ever get a loop that

small for that much cable, so you probably have minimum 2’

loops, at least 5 coils. Add a closure and you probably need a

2’X4’ handhole, at least 2’ deep, as a minimum - see the “good”

photo below.
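
Here is a rough sketch of that sizing logic in Python. The 20X bend radius multiplier is inferred from the 1/2” cable and 20” loop figures above, and the function names are ours; use the cable manufacturer's bend radius specification for real designs.

```python
import math


def min_loop_diameter_in(cable_od_in: float, multiplier: float = 20) -> float:
    """Smallest loop diameter (inches) for a bend radius of multiplier x cable diameter."""
    return 2 * multiplier * cable_od_in


def coils_needed(slack_ft: float, loop_diameter_ft: float) -> int:
    """Number of full coils needed to store the slack in loops of a given diameter."""
    return math.ceil(slack_ft / (math.pi * loop_diameter_ft))


print(f"Minimum loop for 1/2 inch cable: {min_loop_diameter_in(0.5):.0f} inches")
print(f"Coils to store 30 feet in 2 foot loops: {coils_needed(30, 2)}")
```

Storing 30 feet of slack in 2 foot loops takes 5 coils, which matches the estimate above, and that is before a splice closure goes into the handhole.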

We were at Corning training last Spring on high fiber count

cables and those cables require ~6’ X 4’ min manholes just to

fit the loops of cable. Handholes can be smaller, depending on

the type of splice or drop, midspan access, etc.

The FOA

Guide pages on OSP Construction created by Joe Botha for

his course in South Africa talk about manholes and handholes on

this

page near the end.

This Jensen

web page shows the number of different designs and sizes.

Detail from Central

FL Expressway Design Standards offers several sizes: 4' X

4' X 4', 4' X 6.5' X 6.5', 4' X 6.5' X 6.5' and specifies

a duct organizer.

Here is a Wisconsin

DOT spec for a 4’ diameter manhole.

NYC

Broadband General Network Specifications: see page

24ff

Bottom

Line:

- Manholes

need to be big enough for the cables they must contain

- They

usually aren't!

Installation

- Cleaning

Bad

Advice

Our inbox

recently had a message with this thought:

"It is time for spring cleaning, and we don't mean just at

home. Contaminated fiber end faces remain the number one cause

of fiber related problems and test failures. With more

stringent loss budgets, higher data speeds and new multifiber

connectors, proactively inspecting and cleaning will help you

ensure network uptime, performance, and reliability. Despite

"everyone" knowing this, fiber contamination and cleaning

generates a lot of failed test results."

Well, experience tells us that "proactively inspecting and

cleaning" can generate a lot of damage to operating fiber

optic networks.

Inspection and cleaning should be done whenever a fiber optic

connection is opened or made, of course. But the act of opening

the connection exposes it to airborne dirt and the possibility

of damage if the tech is not experienced in proper cleaning.

Fiber optic connections are well sealed and if they are clean

when connected, they will not get dirty sitting there. Fiber

optic connections do not accumulate unseen dirt like under your

bed or sofa, requiring periodic cleaning, as implied in this

email.

Clean 'em, inspect 'em to ensure proper cleaning, connect 'em

and LEAVE THEM ALONE!!!

And, duh, remember to put dust caps on connectors AND

receptacles on patch panels when no connections are made.

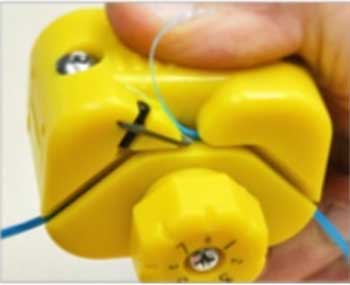

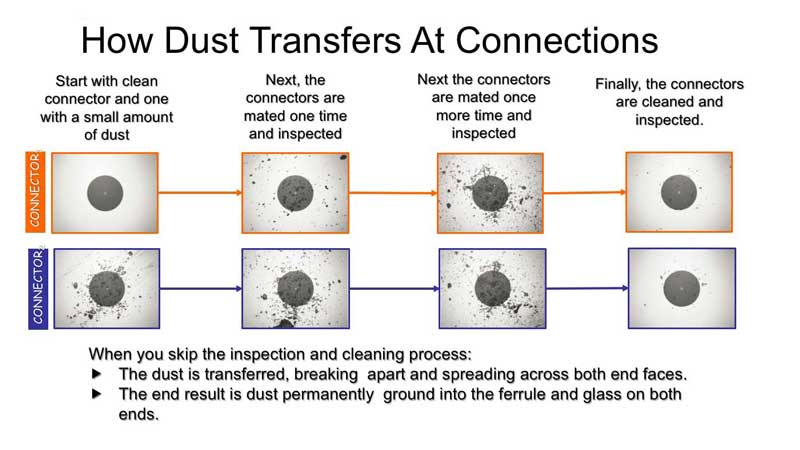

Was

this perhaps another early April Fools' joke...like this one

we ran several years ago about the wrong way to clean

connectors:

Why You

Clean Connectors Before You Make Connections

Brian

Teague of Microcare/Sticklers

sent us this series of photos showing what happens when you make

connections with dirty connectors. It speaks for itself!

How To

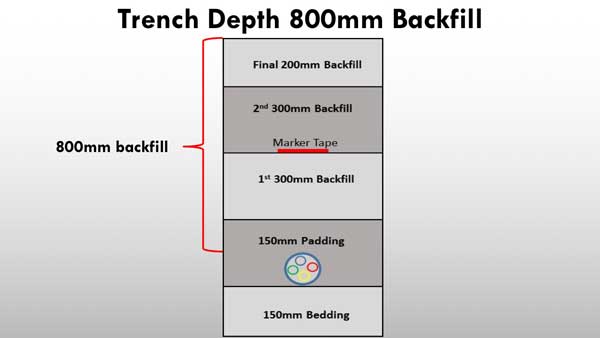

Backfill A Trench For Underground Construction

Here's the

answer to a question we've gotten. Where did we find the answer?

In the new FOA

Guide section on OSP Construction developed using Joe

Botha's OSP Construction Guide which is published by the FOA.

Joe's book covers underground and aerial installation from a

construction point of view, covering material after the FOA's

design material and before you get into the FOA's information on

splicing, termination and testing.

DO NOT FORGET THE MARKER TAPE! It makes the cable easy to locate

and hopefully prevents a dig-up.

The 2019 update of the FOA

Reference Guide To Outside Plant Fiber Optics contains

this and lots of other new material on OSP construction.

FCC

Adopts One Touch Make Ready (OTMR) Rules For Utility Poles

On August

3, The US Federal Communications Commission

adopted a new rule that allows "one-touch make-ready" (OTMR) for

the attachment of new aerial cables to utility poles. From the FCC

explanation of the rule, "the new attacher (sic) may opt

to perform all work to prepare a pole for a new attachment. OTMR

should accelerate broadband deployment and reduce costs by

allowing the party with the strongest incentive to prepare the

pole to efficiently perform the work itself."

You may remember that FOA has reported on the "Pole

Wars" for several years. Battles over making poles

available and/or ready for additional cable installation have

been slowing broadband installations for years and now threaten

upgrading cellular service to small cells and 5G in many areas.

Is OTMR

A Good Idea?

OTMR has

the potential to speed deployment of new communications networks

if handled properly. However, one hopes the installers doing

OTMR know what they are doing. We've heard so many horror

stories about botched installations, cut fiber and power cables,

punctured water mains and gas lines done by inept contractors

that we just hope this doesn't cause even more trouble.

For example, here are 2 poles in the LA area where small cells

are being installed. Can just any contractor handle OTMR on

these poles?

Bottom Line

- OTMR

may be problematic if contractors doing installation are

not competent

Fiber

Optic Testing

Test

Sources For Multimode Fiber Testing

One of

our schools recently asked us for recommendations on test

sources for multimode fiber, wondering if the sources should

be an LED or laser. Multimode test sources are always LEDs and

these sources should be always used with a mode conditioner,

usually a mandrel wrap. See here.

This is how all standards for testing multimode fiber require

test sources.

Years

ago, as systems got faster, LEDs were too slow at speeds

above a few hundred Mb/s. Fortunately 850nm VCSELs were

invented to provide the solution for faster transmitters. But

VCSELs were not good for test sources. They had variable mode

fill and modal noise, so testers continued using LEDs for test

sources, but with mode conditioners like the mandrel wrap that

filtered out higher order modes to simulate the mode fill of

an ideal VCSEL.

The bigger issue with MM fiber is whether to test at both 850

and 1300nm. In the past, we did both because there were

systems that used 1300nm LEDs or Fabry-Perot lasers for

sources because the fiber attenuation was lower at 1300nm than

850nm. As network speeds increased to 1Gb/s and above,

bandwidth became the limiting factor for distance, not

attenuation. VCSELs only worked at 850nm and all systems

in MM basically have been switched to 850nm VCSELs.

We also used to test at both wavelengths because if a fiber

was stressed, the bending losses were higher at 1300nm, so you

could determine if a fiber had problems with stress. But since

MM fiber has all gone to bend-insensitive fiber, that no

longer works and the need or reason to test at 1300nm went

away. It has not been purged from all standards yet however.

To complicate things, standards say that you should not use

bend-insensitive fiber for test cables (launch or receiver

reference cables) because they modify modal distribution, but

it’s a moot point - practically all MM fiber is

bend-insensitive so you have no choice but to use it. And most

links will have BI to BI connections that should be tested.

But we checked with some technical contacts and there are no

specifications for BI fiber mandrels as mode conditioners.

Best

solution - 850 LED with a mode conditioner on non-BI fiber (if

you can find it - see above).

Bottom

Line

- Multimode

fiber needs testing with an 850nm LED source

Power

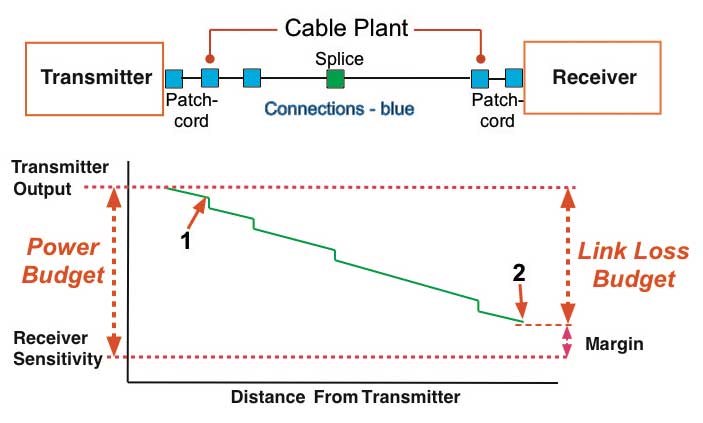

Budgets and Loss Budgets

Not only

was this topic a long discussion with our new instructors but

it's a common question asked of the FOA - we received two

inquiries on loss budgets in the last month alone. The confusion

starts with the difference between a power budget and a loss

budget, so we'll start there, and we'll include the points where

we were stopped to explain things.

What's The Difference Between Power Budget And Loss Budget?

- A

power budget is the amount of loss the link electronics can

tolerate - transmitter to receiver. You use this to compare

to the cable plant link loss budget when designing a cable

plant to ensure the link will work on the cable plant

design.

- The

link loss budget is the estimated loss of the fiber optic

cable plant including the loss of the fiber, splices and

connections. You compare that to the power budget to ensure

the link will work on the cable plant being designed, then

again after installation to compare to test results.

Consider this diagram:

At the top of the diagram above is a fiber optic link with a

transmitter connected to a cable plant with a patchcord. The

cable plant has 1 intermediate connection and 1 splice plus, of

course, "connectors" on each end which become "connections" when

the transmitter and receiver patchcords are connected. At the

receiver end, a patchcord connects the cable plant to the

receiver.

Definition: Connection: A connector is the hardware attached

to the end of a fiber which allows it to be connected to

another fiber or a transmitter or receiver. When two

connectors are mated to join two fibers, usually requiring a

mating adapter, it is called a connection. Connections have

losses - connectors do not.

Below the drawing of the fiber optic link is a graph of the power in the link over the length of the link. The vertical scale (Y) is optical power at the distance from the transmitter shown in the horizontal (X) scale. As the optical signal from the transmitter travels down the fiber, the fiber attenuation and the losses in connections and splices reduce the power as shown in the green graph of the power.

Comment: That looks like an OTDR trace. Of course. The

OTDR sends a test pulse down the fiber and backscatter allows

the OTDR to convert that into a snapshot of what happens to a

pulse going down the fiber. The power in the test pulse is diminished by the attenuation of the fiber and the loss in connections and splices. In our drawing, we don't see reflectance peaks, but that additional loss is included in the loss of the connection.

Power Budget: On the left side of the graph, we show the

power coupled from the transmitter into its patchcord, measured

at point #1 and the attenuated signal at the end of the

patchcord connected to the receiver shown at point #2. We also

show the receiver sensitivity, the minimum power required for

the transmitter and receiver to send error-free data. The

difference between the transmitter output and the receiver

sensitivity is the Power Budget. Expressed in dB, the

power budget is the amount of loss the link can tolerate and

still work properly - to

send error-free data.

Link Loss: The difference between the transmitter

output (point #1) and the receiver power at its input (point

#2) is the actual loss of the cable plant experienced by the

fiber optic data link.

Comment: That sounds like what was called "insertion

loss" with a test source and power meter. Exactly! Replace

"transmitter" with test source, "receiver" with power meter

and "patchcords" with reference test cables and you have the

diagram for insertion loss testing which we do on every

cable.

The loss of the cable plant is what we estimate when we calculate a "Link Loss Budget" for the cable plant, adding up losses due to fiber attenuation, splice losses and connection losses. Sometimes we also add splitters or other passive devices.
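As a rough sketch of that addition, here is how the estimate might be scripted. The per-component loss values and the 0.5 km length are illustrative assumptions, not figures from this article; substitute the values from your own standards or component specifications. The function name `link_loss_budget` is ours.

```python
# Illustrative per-component losses. These are assumptions for the example,
# not values taken from the article.
FIBER_DB_PER_KM = 3.0   # assumed multimode attenuation at 850nm
CONNECTION_DB = 0.5     # assumed loss per mated connection
SPLICE_DB = 0.3         # assumed loss per splice


def link_loss_budget(length_km, connections, splices,
                     fiber_db_per_km=FIBER_DB_PER_KM,
                     connection_db=CONNECTION_DB,
                     splice_db=SPLICE_DB,
                     other_passive_db=0.0):
    """Estimate cable plant loss in dB by summing component losses."""
    fiber_loss = length_km * fiber_db_per_km
    connection_loss = connections * connection_db
    splice_loss = splices * splice_db
    # other_passive_db covers splitters or other passive devices, if any.
    return fiber_loss + connection_loss + splice_loss + other_passive_db


# Cable plant like the one in the diagram: a connection at each end,
# 1 intermediate connection and 1 splice. The length is a made-up value.
estimate = link_loss_budget(length_km=0.5, connections=3, splices=1)
print(f"Estimated link loss budget: {estimate:.2f} dB")
```

Note that the count is of connections (mated pairs), not individual connectors, in keeping with the definition above.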

Margin: The margin of a link is the difference between

the Power Budget and the Loss of the cable plant.
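The arithmetic behind the power budget and margin can be sketched in a few lines. The transmitter output, receiver sensitivity and cable plant loss below are hypothetical numbers chosen only to show the calculation, and the function names are ours.

```python
def power_budget_db(tx_output_dbm, rx_sensitivity_dbm):
    """Power budget: transmitter output minus receiver sensitivity (dB)."""
    return tx_output_dbm - rx_sensitivity_dbm


def margin_db(power_budget, cable_plant_loss_db):
    """Margin: power budget minus the loss of the cable plant (dB)."""
    return power_budget - cable_plant_loss_db


# Hypothetical link values, for illustration only.
tx_output_dbm = -6.0        # power coupled into the patchcord (point #1)
rx_sensitivity_dbm = -17.0  # minimum receiver power for error-free data
cable_plant_loss_db = 3.3   # estimated or measured cable plant loss

budget = power_budget_db(tx_output_dbm, rx_sensitivity_dbm)
margin = margin_db(budget, cable_plant_loss_db)
print(f"Power budget: {budget:.1f} dB, margin: {margin:.1f} dB")
```

A negative margin would mean the cable plant loses more than the link electronics can tolerate.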

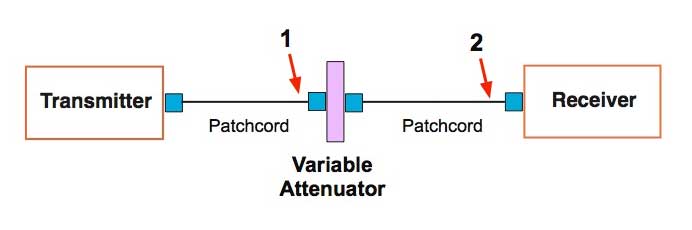

Determining The Power Budget For A Link

Question: How is the power budget determined? Well, you

test the link under operating conditions and insert loss while

watching the data transmission quality. The test setup is like

this:

Connect the transmitter and receiver with patchcords to a variable attenuator. Increase attenuation until you see the link has a high bit-error rate (BER for digital links) or poor signal-to-noise ratio (SNR for analog links). By measuring the output of the transmitter patchcord (point #1) and the output of the receiver patchcord (point #2), you can determine the maximum loss of the link and the minimum power the receiver needs.
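If you log the readings from such an attenuator sweep, a small script can pick out the lowest receiver power that still gave acceptable transmission and the corresponding loss. This is only a sketch: the sweep data is invented, the BER limit of 1e-12 is an assumed pass/fail threshold, and the function name is ours.

```python
def max_tolerable_loss(tx_power_dbm, sweep, ber_limit=1e-12):
    """Find the lowest passing receiver power and the loss it implies.

    sweep is a list of (receiver_power_dbm, measured_ber) pairs taken at
    point #2 while attenuation is increased. Assumes BER only gets worse
    as receiver power drops.
    """
    passing = [rx for rx, ber in sweep if ber <= ber_limit]
    if not passing:
        raise ValueError("no measurement met the BER limit")
    lowest_passing_dbm = min(passing)
    return lowest_passing_dbm, tx_power_dbm - lowest_passing_dbm


# Invented sweep data for illustration: (receiver power in dBm, BER).
sweep = [(-10.0, 1e-15), (-14.0, 1e-14), (-17.0, 1e-12),
         (-19.0, 1e-9), (-21.0, 1e-6)]

rx_min_dbm, max_loss_db = max_tolerable_loss(tx_power_dbm=-6.0, sweep=sweep)
print(f"Lowest passing receiver power: {rx_min_dbm} dBm")
print(f"Maximum loss of the link: {max_loss_db} dB")
```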

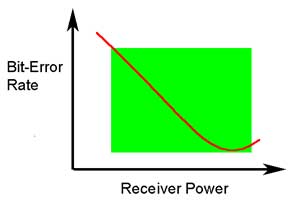

From this

test you can generate a graph that looks like this:

A receiver must have enough power to have a low BER (or a high SNR on analog links) but not so much that it overloads and signal distortion affects transmission. We show it as a function of receiver power here, but knowing the transmitter output, this curve can be translated to loss: you need low enough loss in the cable plant to have good transmission, but with very low loss the receiver may overload, so you add an attenuator at the receiver to get the loss up to an acceptable level.
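Translating the receiver's power range into a loss range, and working out how much attenuation to add on a short link, might look like the sketch below. The transceiver values are hypothetical and the function names are ours.

```python
def loss_window_db(tx_output_dbm, rx_sensitivity_dbm, rx_overload_dbm):
    """Return (min_loss, max_loss) in dB that keeps the receiver in range."""
    # Below min_loss the receiver overloads; above max_loss it is starved.
    min_loss = max(0.0, tx_output_dbm - rx_overload_dbm)
    max_loss = tx_output_dbm - rx_sensitivity_dbm
    return min_loss, max_loss


def attenuator_needed_db(cable_plant_loss_db, min_loss_db):
    """Extra attenuation to add at the receiver; 0 if none is needed."""
    return max(0.0, min_loss_db - cable_plant_loss_db)


# Hypothetical transceiver: 0 dBm output, -20 dBm sensitivity,
# -3 dBm overload level. The 1 dB cable plant is a short, low-loss link.
min_loss, max_loss = loss_window_db(0.0, -20.0, -3.0)
pad = attenuator_needed_db(cable_plant_loss_db=1.0, min_loss_db=min_loss)
print(f"Acceptable loss: {min_loss:.1f} to {max_loss:.1f} dB")
print(f"Attenuator to add at the receiver: {pad:.1f} dB")
```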

You must realize that not all transmitters have the same power output, nor do all receivers have the same sensitivity, so you test several (often many) to get an idea of the variability of the devices. Depending on the point of view of the manufacturer, you generally err on the conservative side so that your likelihood of providing a customer with a pair of devices that do not work is low. It's easier that way.
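One simple way to be conservative, shown here as an assumption rather than as any manufacturer's actual method, is to pair the weakest transmitter tested with the least sensitive receiver tested. The sample values and the function name are invented for illustration.

```python
def conservative_power_budget_db(tx_outputs_dbm, rx_sensitivities_dbm):
    """Worst-case budget: weakest transmitter with least sensitive receiver."""
    weakest_tx = min(tx_outputs_dbm)
    # The least sensitive receiver needs the most power, so it has the
    # highest (least negative) sensitivity value in dBm.
    least_sensitive_rx = max(rx_sensitivities_dbm)
    return weakest_tx - least_sensitive_rx


# Invented test results from several sample devices, in dBm.
tx_outputs = [-5.5, -6.0, -7.0]
rx_sensitivities = [-18.0, -17.5, -17.0]

budget = conservative_power_budget_db(tx_outputs, rx_sensitivities)
print(f"Conservative power budget: {budget:.1f} dB")
```

Any pair of devices from the tested batch should then meet or exceed the specified budget.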

The Perils Of 2-Cable Referencing

FOA

received an inquiry about fluctuations in insertion loss

testing. The installer was using a two cable reference

method for setting a "0dB" reference where you attach one

reference cable to the source, another to the meter and

connect them to set the "0dB" reference. The 2-cable reference method is allowed by most insertion loss testing standards, along with the 1- and 3-cable reference methods, although each gives a different loss value.

3 different ways to set a 0dB reference for loss testing

When a 1-cable reference is used, one sets a reference

value at the output of the launch cable and measures the

total loss. With a 2-cable reference, a connection between

the launch and receive reference cables is included in

making the reference, so the loss value measured will be

lower by the amount of that connection loss. The 3-cable

reference includes two connection losses so the loss will

be lower still.
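To see how the three methods give different readings for the same cable plant, here is a small sketch. It assumes every connection included in the reference has the same loss, which is a simplification, and the 2.0 dB and 0.3 dB values are made up for the example.

```python
# Number of reference-cable connections included when setting "0dB".
REFERENCE_CONNECTIONS = {"1-cable": 0, "2-cable": 1, "3-cable": 2}


def measured_loss_db(total_loss_db, method, ref_connection_db):
    """Loss the meter would report for a given reference method.

    total_loss_db is the loss seen with a 1-cable reference.
    ref_connection_db is the assumed loss of each connection that was
    included in the reference.
    """
    return total_loss_db - REFERENCE_CONNECTIONS[method] * ref_connection_db


# Made-up example: 2.0 dB total loss, 0.3 dB per reference connection.
for method in ("1-cable", "2-cable", "3-cable"):
    reading = measured_loss_db(2.0, method, 0.3)
    print(f"{method} reference: {reading:.1f} dB")
```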

The problem with the two cable reference is the

uncertainty added by including the connection between the

two reference cables when setting the "0dB"

reference.

Unless you carefully inspect and clean the two connectors

and check the loss of that connection before setting the "0dB"

reference, you add a large amount of uncertainty to

measurements of loss. The best way to use a 2-cable

reference is to set up the source and reference cable

(with inspected and cleaned connectors), measure the

output of the launch cable, attach the receive cable (with

inspected and cleaned connectors) and measure the loss of

the connection before setting the "0dB"

reference. If the connection loss is not less than 0.5dB,

you have connectors that should not be used for testing

other cables. Find better reference cables.
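The check described above can be written down as a short sketch. The 0.5dB limit comes from the text; the meter readings and function names are hypothetical.

```python
MAX_REFERENCE_CONNECTION_DB = 0.5  # limit stated in the text above


def reference_connection_loss_db(launch_output_dbm, with_receive_cable_dbm):
    """Loss of the connection between launch and receive reference cables.

    launch_output_dbm: power measured at the output of the launch cable.
    with_receive_cable_dbm: power measured after attaching the receive cable.
    """
    return launch_output_dbm - with_receive_cable_dbm


def reference_cables_ok(launch_output_dbm, with_receive_cable_dbm):
    """True if the connection loss is less than the 0.5dB limit."""
    loss = reference_connection_loss_db(launch_output_dbm,
                                        with_receive_cable_dbm)
    return loss < MAX_REFERENCE_CONNECTION_DB


# Hypothetical meter readings in dBm.
print(reference_cables_ok(-20.0, -20.3))  # about 0.3 dB connection loss
print(reference_cables_ok(-20.0, -20.7))  # about 0.7 dB connection loss
```

Only when the check passes would you go on to set the "0dB" reference with that pair of cables.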

The two cable reference is often used when the connectors

on the cables or cable plant being tested are not

compatible with the connectors on the test equipment, so

you must use hybrid launch and receive cables. Then you can only set the reference with the two cables connected to each other. In that case, you need the 2-cable reference but should expect lower measured loss and higher measurement uncertainty.

Experiments have shown that the uncertainty with a 1-cable

reference is around +/-0.05dB while the 2-cable has an

uncertainty of around +/-0.2 to 0.25dB caused by the

mating connection between the two reference cables. Those

experiments also showed the uncertainty of the 3-cable

reference was not significantly larger than the 2-cable

reference.

When possible, use a 1-cable reference. When you must use

the 2- or 3-cable reference, inspect and clean all

connectors carefully before making connections for the

reference or test.

Bottom Line:

- The value of loss you measure depends on how you set your "0dB" reference - more reference cables means lower measured loss.

- Connections between reference cables when setting a 0dB reference add uncertainty to measurements.

More information: 5 ways to test a fiber optic cable plant

Safety

On The Job

Safety is

the most important part of any job. Installers need to

understand the safety issues to be safe. An excellent guide to

analyzing job hazards is from OSHA, the US Occupational Safety

and Health Administration. Here

is a link to their guide for job hazard analysis.
